#FactCheck - Viral Video of Electric Car Powered by Generator Is AI-Generated

Executive Summary

A video circulating on social media shows an electric car allegedly being powered by a portable generator attached to it. The clip is being shared with the claim that the generator is directly running the vehicle, suggesting a groundbreaking or unusual technological feat. However, research conducted by CyberPeace found the viral claim to be false: the video is not authentic but AI-generated.

Claim

On February 22, 2026, a user on X (formerly Twitter) shared the viral video with the caption: “After watching this video, Newton might turn in his grave.” The post implied that the video demonstrates a scientific impossibility.

Fact Check:

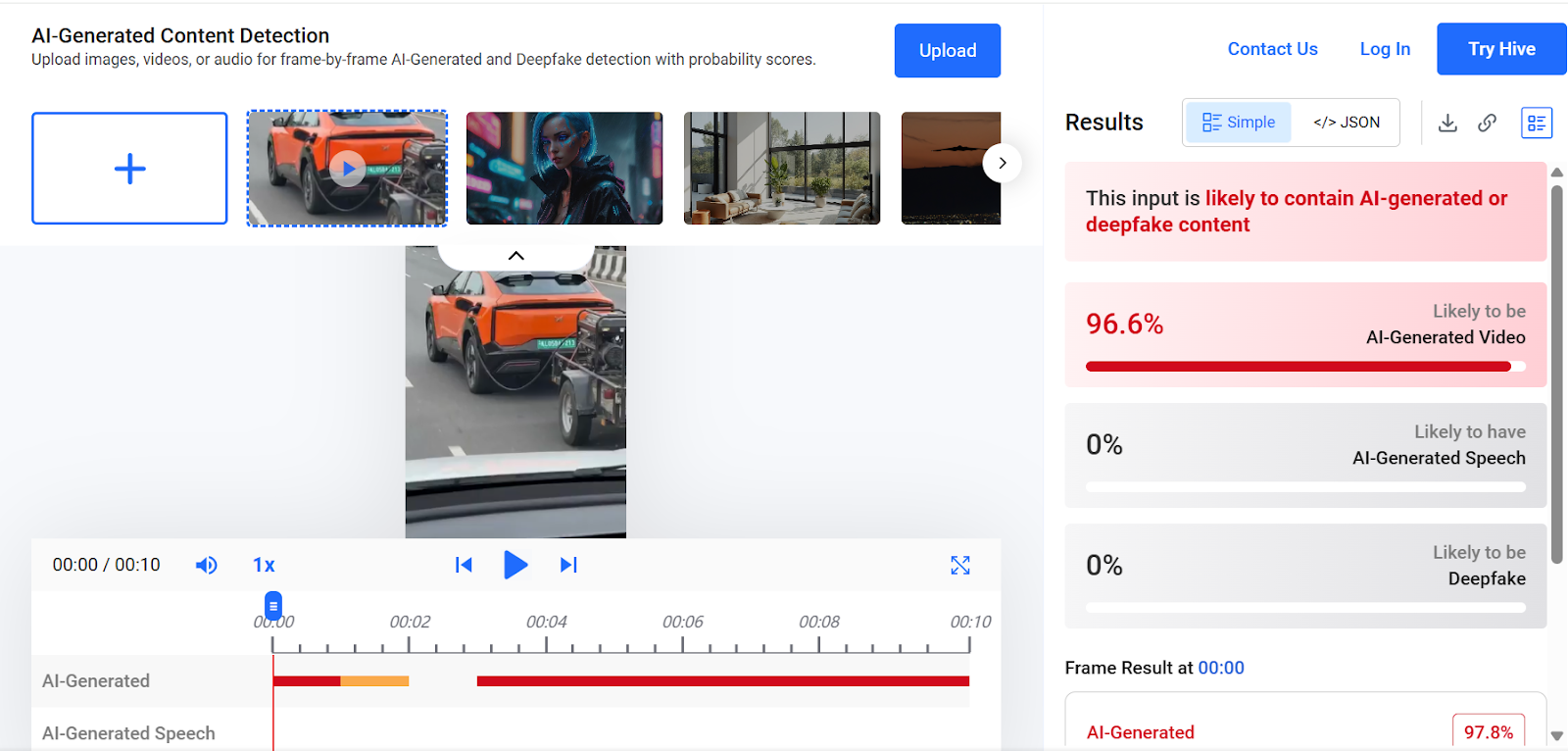

To verify the claim, we conducted a keyword search on Google. However, we found no credible reports from any reputable media organization supporting the assertion made in the viral post. A close examination of the video revealed several visual inconsistencies and unnatural elements, raising suspicion that the footage may have been generated using artificial intelligence. We then analyzed the video using the AI detection tool Hive Moderation. The results indicated a 96 percent probability that the video was AI-generated.

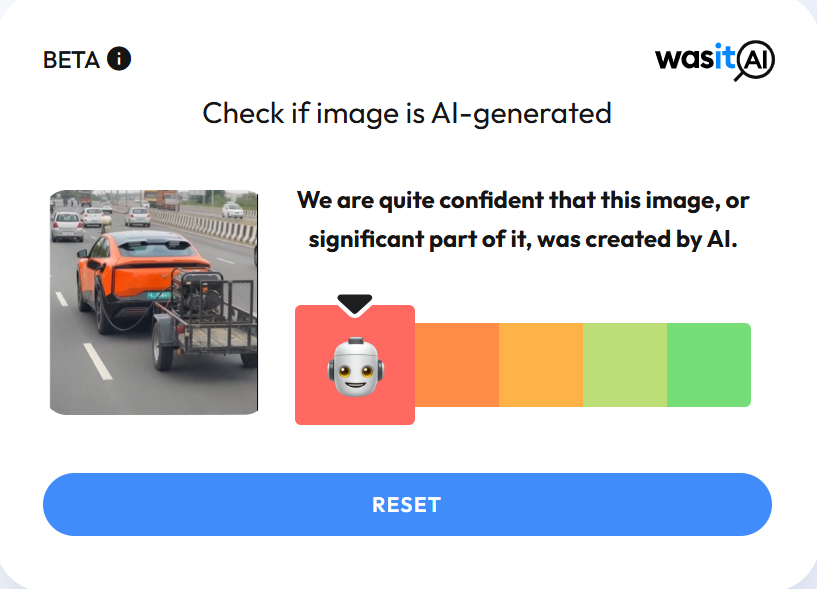

In the next step of our research, we scanned the video using another AI detection platform, WasItAI, which also concluded that the viral video was AI-generated.

Conclusion

Our research confirms that the viral video is not real. It has been artificially created using AI technology and is being circulated with a misleading claim.

Related Blogs

Introduction

Cyber slavery has emerged as a serious menace. Offenders target innocent individuals, luring them with false promises of employment, only to capture them and subject them to horrific torture and forced labour. According to reports, hundreds of Indians are being held in 'cyber slavery' in certain Southeast Asian countries. Indians who travelled to Southeast Asian nations such as Cambodia in the hope of finding work and establishing themselves have instead fallen into the trap of cyber slavery. According to reports, 30,000 Indians who travelled to this region on tourist visas between 2022 and 2024 did not return. India Today's coverage showed survivors who escaped and returned to India describing the terrifying experiences they endured while being coerced into cyber slavery.

Tricked by a Job Offer, Trapped in Cyber Slavery

India Today aired testimonials of cyber slavery victims who described how they were trapped. One individual shared that he had applied for a well-paying job as an electrician in Cambodia through an agent in Delhi. However, upon arriving in Cambodia, he was offered a job with a Chinese company where he was forced to participate in cyber scam operations and online fraudulent activities.

He revealed that the workers were each given a personal system and a mobile phone and were compelled to use these devices to cheat Indian individuals and commit cyber fraud. They were forced to work 12-hour shifts. After working there for several months, he repeatedly asked his agent to help him escape. In response, members of the Chinese group forced him into a truck, assaulted him, and left him for dead on the side of the road. He survived, contacted locals, eventually got in touch with his brother in India, and managed to return home.

This case highlights how cyber-criminal groups deceive innocent individuals with the false promise of employment and then coerce them into committing cyber fraud against people in their own country. According to the Ministry of Home Affairs' Indian Cyber Crime Coordination Centre (I4C), there has been a significant rise in cybercrimes targeting Indians, with approximately 45% of these cases originating from Southeast Asia.

CyberPeace Recommendations

Cyber slavery has developed into a serious problem, beginning with digital deception and progressing to physical torture and violence used to force victims to commit online fraud. It is also a violation of human rights. The government has taken note of the situation, and the Indian Cyber Crime Coordination Centre (I4C) is taking proactive steps to address it. Netizens should exercise due care and caution, as awareness is the first line of defence. By remaining vigilant and double-checking information against reliable sources, they can detect and resist fake overseas job offers and the manipulative techniques of scammers, and protect themselves from threats that could endanger their lives.

References

- CyberPeace Highlights Cyber Slavery: A Serious Concern https://www.cyberpeace.org/resources/blogs/cyber-slavery-a-serious-concern

- https://www.indiatoday.in/india/story/india-today-operation-cyber-slaves-stories-of-golden-triangle-network-of-fake-job-offers-2642498-2024-11-29

- https://www.indiatoday.in/india/video/cyber-slavery-survivors-narrate-harrowing-accounts-of-torture-2642540-2024-11-29?utm_source=washare

Introduction

The inauguration of the Google Safety Engineering Centre (GSEC) in Hyderabad on 18th June, 2025, marks a pivotal moment not just for India, but for the entire Asia-Pacific region's digital future. As only the fourth such centre in the world after Munich, Dublin, and Málaga, its presence signals a shift in how AI safety, cybersecurity, and digital trust are being decentralised, leading to a more globalised and inclusive tech ecosystem. India's digitisation has advanced rapidly over the years, bringing millions of first-time users online who, depending on their awareness, are susceptible to online scams, phishing, deepfakes, and AI-driven fraud. The establishment of GSEC is not just the launch of a facility but a step towards addressing AI readiness, user protection, and ecosystem resilience.

Building a Safer Digital Future in the Global South

The GSEC is set to operationalise the Google Safety Charter, designed around three core pillars: empowering users by protecting them from online fraud, strengthening cybersecurity for government and enterprise, and advancing responsible AI in platform design and execution. This represents a shift from standard reactive safety responses to proactive, AI-driven risk mitigation. The goal is to make safety tools not only effective, but tailored to threats unique to the Global South, from multilingual phishing to financial fraud via unofficial lending apps. The centre is expected to stimulate regional cybersecurity ecosystems by creating jobs, fostering public-private partnerships, and enabling collaboration across academia, law enforcement, civil society, and startups. In doing so, it positions Asia-Pacific not as a consumer of standard Western safety solutions but as an active contributor to the next generation of digital safeguards and customised solutions.

Solutions previously piloted by Google include DigiKavach, a real-time fraud detection framework, along with tools such as spam protection in mobile operating systems and app vetting mechanisms. GSEC could help scale and integrate these efforts into systems-level responses, in which threat detection, safety warnings, and reporting mechanisms are coordinated seamlessly across platforms. This reimagines safety as a core design principle of India's digital public infrastructure rather than as a response that follows an attack.

CyberPeace Insights

The launch aligns with events such as the AI Readiness Methodology Conference recently held in New Delhi, which brought together researchers, policymakers, and industry leaders to discuss ethical, secure, and inclusive AI implementation. As the world grapples with AI technologies ranging from generative content to algorithmic decision-making, centres like GSEC can play a critical role in defining the safeguards and governance structures that support rapid innovation without compromising public trust and safety. The region's experiences and innovations in AI governance must shape global norms, and tech firms have a significant role to play in that process. Beyond this, efforts to build digital infrastructure, and safety centres to protect it, resonate with India's vision of becoming a global leader in AI.

References

- https://www.thehindu.com/news/cities/Hyderabad/google-safety-engineering-centre-india-inaugurated-in-hyderabad/article69708279.ece

- https://www.businesstoday.in/technology/news/story/google-launches-safety-charter-to-secure-indias-ai-future-flags-online-fraud-and-cyber-threats-480718-2025-06-17?utm_source=recengine&utm_medium=web&referral=yes&utm_content=footerstrip-1&t_source=recengine&t_medium=web&t_content=footerstrip-1&t_psl=False

- https://blog.google/intl/en-in/partnering-indias-success-in-a-new-digital-paradigm/

- https://blog.google/intl/en-in/company-news/googles-safety-charter-for-indias-ai-led-transformation/

- https://economictimes.indiatimes.com/magazines/panache/google-rolls-out-hyderabad-hub-for-online-safety-launches-first-indian-google-safety-engineering-centre/articleshow/121928037.cms?from=mdr

About Customs Scam:

The Customs Scam is a type of fraud in which scammers pretend to be from a well-known courier company (DTDC, etc.), the customs department, or another government entity. They try to deceive their targets into transferring money to resolve fake customs-related issues. The Research Wing at CyberPeace, along with the Research Wing of Autobot Infosec Private Ltd., investigated this case through Open Source Intelligence methods and undercover interactions with the scammers, and arrived at some credible findings.

Case Study:

The victim receives a phone call from someone posing as an employee of a well-known courier company (DTDC, etc.) or, in some cases, a customs officer. The caller claims that a parcel in the victim's name has been taken into custody because of inappropriate content. To make the case appear authentic, the scammer provides an employee ID and an FIR number and shows empathy towards the victim. The scammer then offers to connect the victim with a police officer for further action. This so-called police officer makes a show of transparency: he asks the victim to join a Skype video call, even allowing time to install the Skype app, and instructs the victim to connect with a Skype ID that he provides, where the scammers have staged a fake police station environment. To create panic, he also claims to have contacted headquarters and found that the victim's phone number is associated with many illegal activities.

The scammers then ask the victim for personal details such as home address, office address, Aadhaar number, PAN card number, and screenshots of bank accounts showing the available balance, all for the sake of the so-called investigation. Sometimes they also demand a large sum of money to resolve the issue and create false urgency to pressure the victim into paying. The fake officer sternly warns the victim not to contact any other police officials or professionals, insisting that doing so would only lead to more trouble.

Analysis & Findings:

After receiving complaints of this kind from multiple sources, we analysed the collection of phone numbers from which the calls originated. These phone numbers were analysed for alias name, location, telecom operator, etc. We then checked whether each number was linked to any account on reputed platforms like Google, Facebook, WhatsApp, Twitter, Instagram, and LinkedIn, and on classified platforms such as Locanto.

- Phone Number Analysis: Each phone number looks authentic, cleverly concealing the fraud. Sometimes scammers use virtual/temporary phone numbers for these kinds of scams. In this case the victim was from Delhi, so the scammer posed as an officer from a Delhi police station, while the phone numbers belonged to a different place.

- Undercover Interactions: The interactions with the suspects reveal their chilling modus operandi. These scammers are masters of psychological manipulation. They threaten the victims and act as if they are genuine law enforcement agency (LEA) officers.

- Exploitation Tactics: They target unsuspecting individuals and create fear and fake urgency among the targets to extract sensitive information such as Aadhaar, PAN card and bank account details.

- Fraud Execution: The scammers demand payment to resolve the issue and make use of the stolen personally identifiable information. Once the victims transfer the money, the fraudsters cut off all communication.

- Outcome for Victims: The scammers act so genuine and frame the incidents so realistically that victims don't realise they are trapped in a scam. They suffer severe financial loss and psychological trauma.

Recommendations:

- Verify Identities: It is important to verify the identity of any individual, especially if they demand personal information or payment. Contact the official agency directly using verified contact details to confirm the authenticity of the communication.

- Education on Personal Information: Educate people to protect personal identity numbers such as Aadhaar and PAN card numbers. Always emphasise the dangers of sharing such data during phone conversations.

- Report Suspicious Activity: Prompt reporting of suspicious phone calls or messages to relevant authorities and consumer protection agencies helps in tracking down scammers and prevents people from falling victim. Report to https://cybercrime.gov.in or reach out to helpline@cyberpeace.net for further assistance.

- Enhanced Cybersecurity Measures: Implement robust cybersecurity measures to detect and mitigate phishing attempts and fraudulent activities. This includes monitoring and blocking suspicious phone numbers and IP addresses associated with scams.
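The number-blocking measure in the last recommendation can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the method used in this research: the function names, the default country code of 91, and the placeholder numbers are all hypothetical. It shows why numbers should be normalised before matching, since the same caller can appear as "+91 ...", "0091...", "0..." or a bare ten-digit number.

```python
import re

def normalise_number(raw: str, default_country_code: str = "91") -> str:
    """Reduce a phone number to digits only, prefixed with a country code.

    Assumption: numbers without a country code are ten-digit Indian numbers.
    """
    digits = re.sub(r"\D", "", raw)  # drop spaces, dashes, "+", brackets
    if digits.startswith("00"):
        # International dialling prefix: the country code follows it.
        digits = digits[2:]
    elif digits.startswith("0"):
        # Single trunk prefix "0": replace it with the country code.
        digits = default_country_code + digits[1:]
    elif len(digits) == 10:
        # Bare national number: prepend the country code.
        digits = default_country_code + digits
    return digits

def is_blocked(raw: str, blocklist: set) -> bool:
    """Return True if the normalised number is on the blocklist."""
    return normalise_number(raw) in blocklist

# Hypothetical placeholder numbers, not real reported numbers.
reported = ["+91 90000 00001", "090000 00002"]
blocklist = {normalise_number(n) for n in reported}

print(is_blocked("9000000001", blocklist))
print(is_blocked("+91 90000 00003", blocklist))
```

Because scammers often use virtual or temporary numbers, a blocklist like this only helps against numbers that have already been reported; it complements, rather than replaces, verifying the caller's identity through official channels.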

Conclusion:

In the Customs Scam, the scammers pretend to be customs or other government officials and sometimes threaten their targets to obtain details such as Aadhaar and PAN card numbers and screenshots of bank accounts showing the available balance. The phone numbers used in these scams were analysed for suspicious activity, and all of them were found to look authentic, concealing the fraudulent activity. Interactions with the scammers reveal that they create fear and urgency in their targets, act as if they are genuine officers, and ask for money to resolve the issue. It is important to stay vigilant and not share any personal or financial information. When facing scams of this kind, report them and spread awareness among others.