#FactCheck - Deepfake Video Falsely Claims to Show a Massive Rally Held in Manipur

Executive Summary:

A viral online video claims to show a massive rally organised in Manipur to stop the ongoing violence in the state. However, the CyberPeace Research Team has confirmed that the video is a deepfake, created using AI technology to fabricate the crowd. There is no original footage of any such protest. The claim is therefore false and misleading.

Claims:

A viral post falsely claims that a massive rally was held in Manipur.

Fact Check:

Upon receiving the viral posts, we conducted a Google Lens search on keyframes of the video. We could not locate any authentic source mentioning such an event, whether recent or past. The viral video exhibited signs of digital manipulation, prompting a deeper investigation.

We used AI detection tools, such as TrueMedia and the Hive AI Detection tool, to analyze the video. The analysis confirmed with 99.7% confidence that the video was a deepfake. The tools identified "substantial evidence of manipulation," particularly in the crowd and the colour gradients, which were found to be artificially generated.

Additionally, an extensive review of official statements and interviews with Manipur State officials revealed no mention of any such rally. No credible reports of such protests were found, further confirming the video’s inauthenticity.

Conclusion:

The viral video claims to show a massive rally held in Manipur. Analysis with truemedia.org and other AI detection tools confirms that the video was manipulated using AI technology, and no official source mentions any such rally. Thus, the CyberPeace Research Team concludes that the claim is false and misleading.

- Claim: A video viral on social media shows a massive rally held in Manipur against the ongoing violence.

- Claimed on: Instagram and X (formerly Twitter)

- Fact Check: False & Misleading

Related Blogs

Amid protests against rising inflation in Iran, a video is being widely shared on social media showing people gathering on streets at night while using mobile phone flashlights. The video is being circulated with the claim that it shows recent protests in Iran. Cyber Peace Foundation’s research found that the video being shared as visuals from the ongoing protests in Iran is not real. Our investigation revealed that the viral video is AI-generated and has no connection with actual events on the ground.

Claim

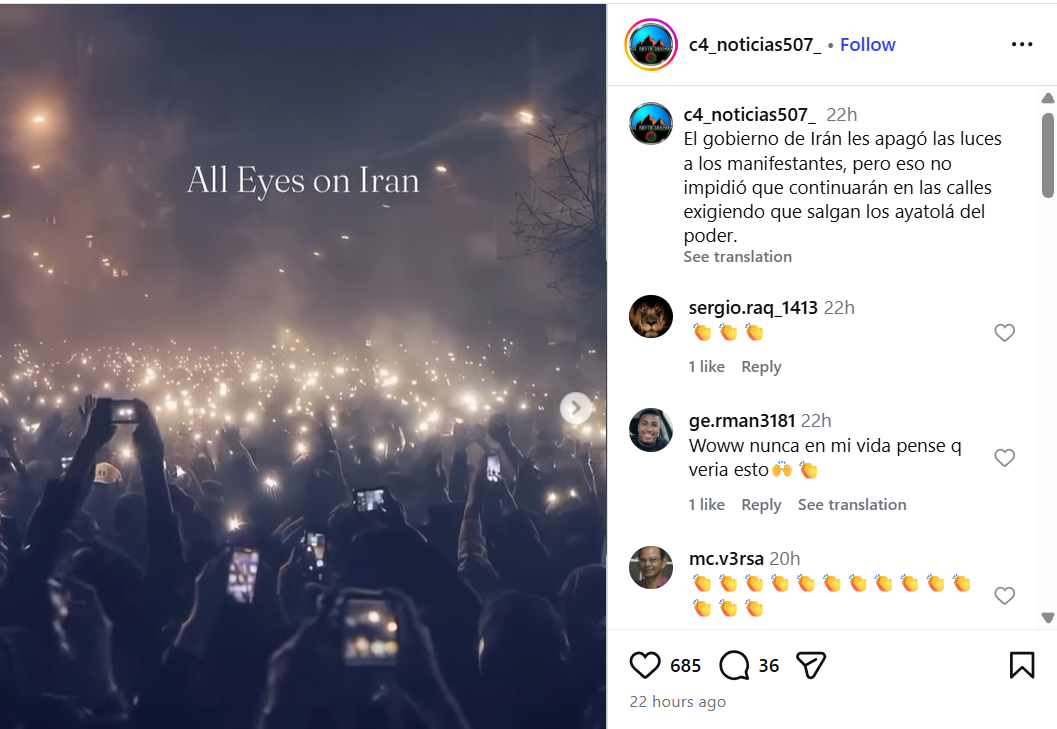

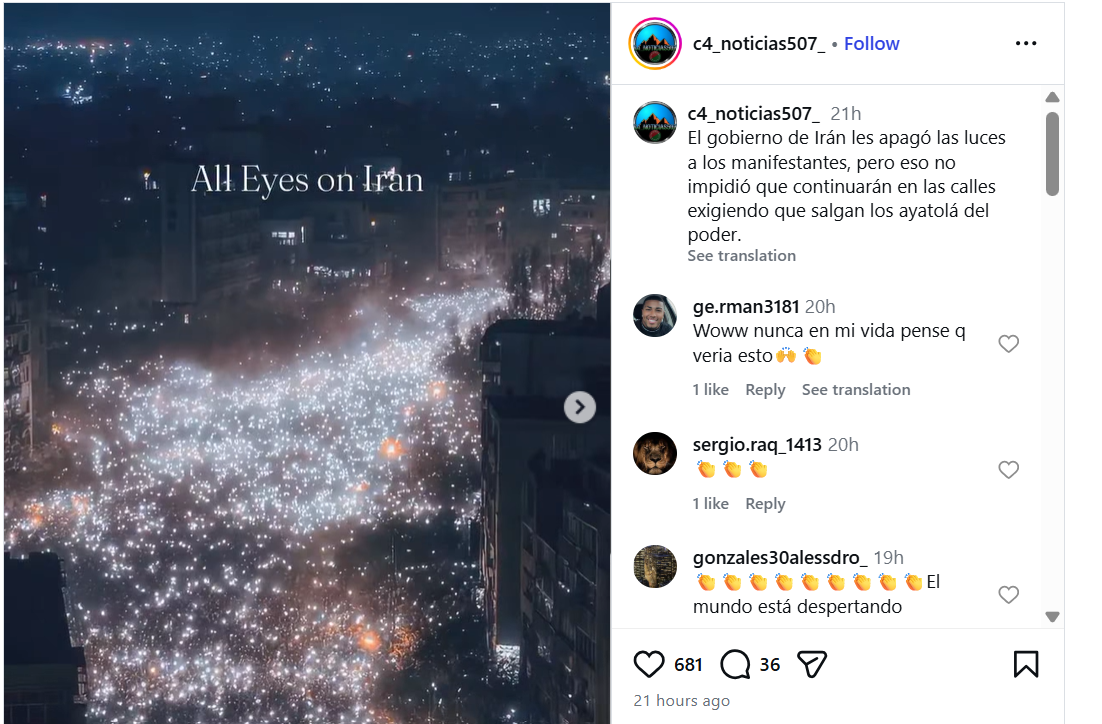

On January 11, 2026, an Instagram user shared the video with a caption written in Spanish. Translated, the caption reads: “The Iranian government shut down the lights of protesters, but that did not stop them from remaining on the streets demanding that the Ayatollahs step down from power.” The post link, its archived version, and screenshots can be seen below: https://www.instagram.com/p/DTXqzayjqFz/

FactCheck:

To verify the claim, we extracted keyframes from the viral video and conducted a Google reverse image search. During this process, we found the same video uploaded on Instagram on January 11, 2026. In that post, the user explicitly stated that the video was created using AI. The caption says that the streetlights were turned off to hide the scale of the protests, but people used their phone lights to show their presence, adding:

“I created this video using AI, inspired by tonight’s protests (January 10, 2026) in Tehran, Iran.” Link to the post and screenshot can be seen below: https://www.instagram.com/p/DTWXsHajNvl/

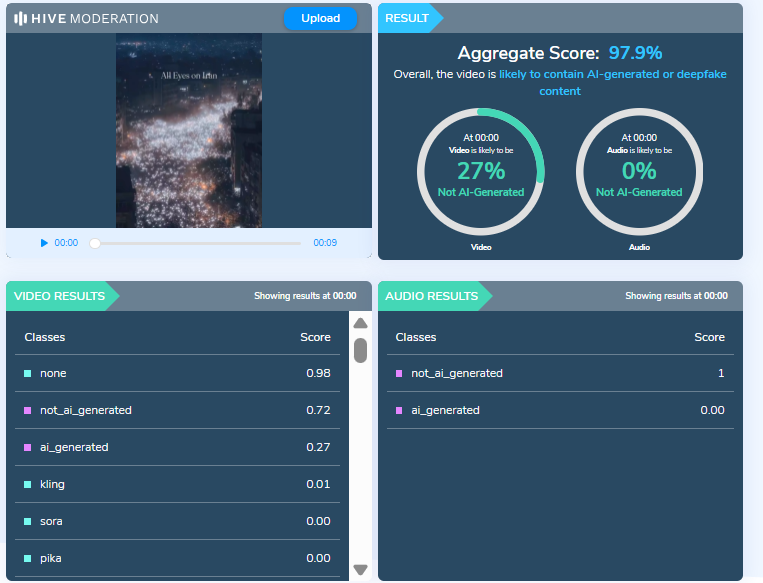

To further verify the authenticity of the video, we scanned it using multiple AI detection tools. Hive Moderation flagged the video as 97 percent likely to be AI-generated.

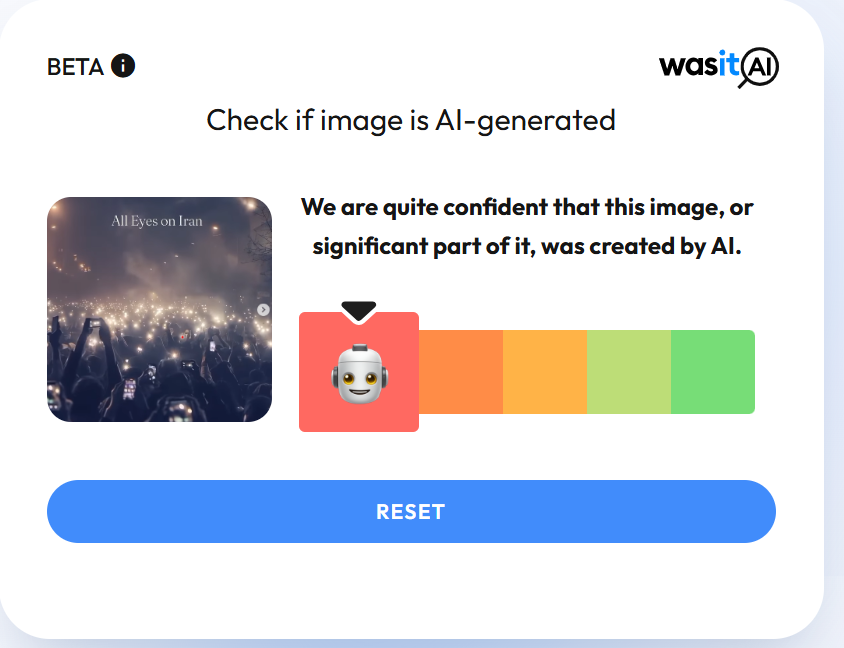

We also scanned the video using another AI detection tool, Wasitai, which likewise identified the video as AI-generated.

Conclusion

Our investigation confirms that the video being shared as footage from protests in Iran is not real. The viral video has been created using artificial intelligence and is being falsely linked to the ongoing protests. The claim circulating on social media is false and misleading.

Introduction:

This op-ed sheds light on the perspectives of the US and China regarding cyber espionage. Additionally, it seeks to analyze China's response to US accusations of cyber espionage.

What is Cyber espionage?

Cyber espionage or cyber spying is the act of obtaining personal, sensitive, or proprietary information from individuals without their knowledge or consent. In an increasingly transparent and technological society, the ability to control the private information an individual reveals on the Internet and the ability of others to access that information are a growing concern. This includes storage and retrieval of e-mail by third parties, social media, search engines, data mining, GPS tracking, the explosion of smartphone usage, and many other technology considerations. In the age of big data, there is a growing concern for privacy issues surrounding the storage and misuse of personal data and non-consensual mining of private information by companies, criminals, and governments.

Cyber espionage aims for economic, political, and technological gain. One example is the Stuxnet cyber-attack (2010) by the US and its ally Israel against Iran’s nuclear facilities. Three espionage tools connected to Stuxnet were discovered, namely Gauss, Flame and Duqu, which were used to steal data such as passwords and screenshots and to exploit Bluetooth and Skype functions.

Cyber espionage is one of the most significant and intriguing international challenges. Many nations, such as the US and China, as well as international bodies, have created their own definitions and have long struggled over cyber espionage norms.

The US Perspective

First, in 2009, US officials (along with those of other allied countries) stated that cyber espionage was acceptable if it safeguarded national security, although they condemned economically motivated cyber espionage. The Director of National Intelligence likewise said in 2013 that the US does not use its foreign intelligence capabilities to steal foreign companies' trade secrets to benefit its own firms. This stance is consistent with the Economic Espionage Act (EEA) of 1996, particularly Section 1831, which prohibits economic espionage. This includes the theft of a trade secret that "will benefit any foreign government, foreign agent or foreign instrumentality."

Second, the US advocates for cybersecurity market standards and strongly opposes transferring personal data extracted from the US Office of Personnel Management (OPM) to cybercrime markets. Furthermore, China has been reported to sell OPM data on illicit markets. It became a grave concern for the US government when the Chinese government managed to acquire sensitive details of 22.1 million US government workers through cyber intrusions in 2014.

Third, cyber espionage is acceptable unless it is used for doxing, which involves disclosing personal information about someone online without their consent and using it as a tool for political influence operations. However, Western academics and scholars have endeavoured to distinguish between doxing and whistleblowing. They argue that whistleblowing, exemplified by events like the Snowden leaks and the Vault 7 disclosures, serves the interests of US citizens. Because the US is regarded as an open society, certain disclosures are not merely encouraged but required by mandate.

Fourth, the US argues that there should be no cyber espionage against critical infrastructure during peacetime. According to the US, there are 16 critical infrastructure sectors, including chemical, nuclear, energy, defence, food, water, and so on. These sectors are considered essential to the US, and any disruption or harm would impact security, national public health and national economic security.

The US concern regarding China’s cyber espionage

According to James Lewis (a senior vice president at the Center for US-China Economic and Security Review Commission), the US faces losses of between $20 billion and $30 billion annually due to China’s cyber espionage. The 2018 U.S. Trade Representative (USTR) Section 301 report highlighted instances where the Chinese government and executives from Chinese companies engaged in clandestine cyber intrusions to obtain commercially valuable information from U.S. businesses. In one such case in 2018, officials from China’s Ministry of State Security stole trade secrets from General Electric Aviation and other aerospace companies.

China's response to the US accusations of cyber espionage

China's perspective on cyber espionage is outlined by its 2014 anti-espionage law, which was revised in 2023. Article 1 of this legislation is formulated to prevent, halt, and punish espionage actions to maintain national security. Article 4 addresses the act of espionage and does not differentiate between state-sponsored cyber espionage for economic purposes and state-sponsored cyber espionage for national security purposes. Unlike the US, China does not draw a clear line between government-to-government hacking (spying) and government-to-corporate sector hacking. This distinction is less apparent in China due to its strong state-owned enterprise (SOE) sector. In the US, by contrast, military spying is considered part of the national interest, while corporate spying is considered a crime.

China asserts that the US has established cyber norms concerning cyber espionage to normalize public attribution as acceptable conduct. This is achieved by targeting China for cyber operations, imposing sanctions on accused Chinese individuals, and making political accusations, such as blaming China and Russia for meddling in US elections. Despite all this, Washington, D.C. has never taken responsibility for the infamous Flame and Stuxnet cyber operations, which were widely recognized as part of a broader collaborative initiative between the US and Israel known as Operation Olympic Games. Additionally, the US takes the lead in surveillance activities conducted against China, Russia, German Chancellor Angela Merkel, the United Nations (UN) Secretary-General, and several French presidents. Surveillance programs such as Irritant Horn, Stellar Wind, Bvp47, the Hive, and PRISM are recognized as tools used by the US to monitor both allies and adversaries to maintain global hegemony.

China urges the US to cease its smear campaign over the alleged Volt Typhoon cyber espionage operation, citing a report titled “Volt Typhoon: A Conspiratorial Swindling Campaign Targets with U.S. Congress and Taxpayers Conducted by U.S. Intelligence Community”, published by China's National Computer Virus Emergency Response Centre and the 360 Digital Security Group on 15 April. According to the report, “Volt Typhoon” is a ransomware cybercriminal group that identifies itself as “Dark Power” and is not affiliated with any state or region. The report alleges that multiple cybersecurity authorities in the US collaborated to fabricate the story in order to secure larger budgets from Congress, while Microsoft and other U.S. cybersecurity firms sought bigger contracts from those authorities. In the report's account, “Volt Typhoon” is a conspiratorial swindling campaign with two objectives: amplifying the “China threat theory” and cheating money from the U.S. Congress and taxpayers.

Beijing condemned the US claims of cyber espionage as lacking any solid evidence. China also accuses the US of economic espionage, citing a European Parliament report that the National Security Agency (NSA) was involved in assisting Boeing in beating Airbus for a multi-billion-dollar contract. Furthermore, Brazilian President Dilma Rousseff accused the US authorities of spying on the state-owned oil company Petrobras for economic reasons.

Conclusion

In 2015, the US and China marked a milestone when Presidents Xi Jinping and Barack Obama signed an agreement committing that neither country's government would conduct or knowingly support cyber-enabled theft of trade secrets, intellectual property, or other confidential business information to grant competitive advantages to firms or commercial sectors. However, the China Cybersecurity Industry Alliance (CCIA) published a report titled 'US Threats and Sabotage to the Security and Development of Global Cyberspace' in 2024, highlighting escalating US cyber-attack and espionage activities against China and other nations. Additionally, there has been a considerable increase in the volume and sophistication of Chinese hacking since 2016. According to a survey by the Center for Strategic and International Studies, out of 224 cyber espionage incidents reported since 2000, 69% occurred after Xi assumed office. Therefore, China and the US must address cybersecurity issues through dialogue and cooperation, utilizing bilateral and multilateral agreements.

Executive Summary

A deepfake video is being widely circulated on social media with a false claim that External Affairs Minister S. Jaishankar admitted in a podcast interview that India was surprised by Pakistan’s counter-response during “Operation Sindoor” and suffered some losses. However, a fact-check by CyberPeace Research Wing has found the claim to be fake. The research shows that AI-generated audio has been used to misrepresent the External Affairs Minister’s remarks.

Claim

A Facebook user shared the viral video claiming that in a recent podcast with journalist Smita Prakash, Jaishankar admitted that Pakistan’s aggressive response during Operation Sindoor had caught India off guard.

Fact Check

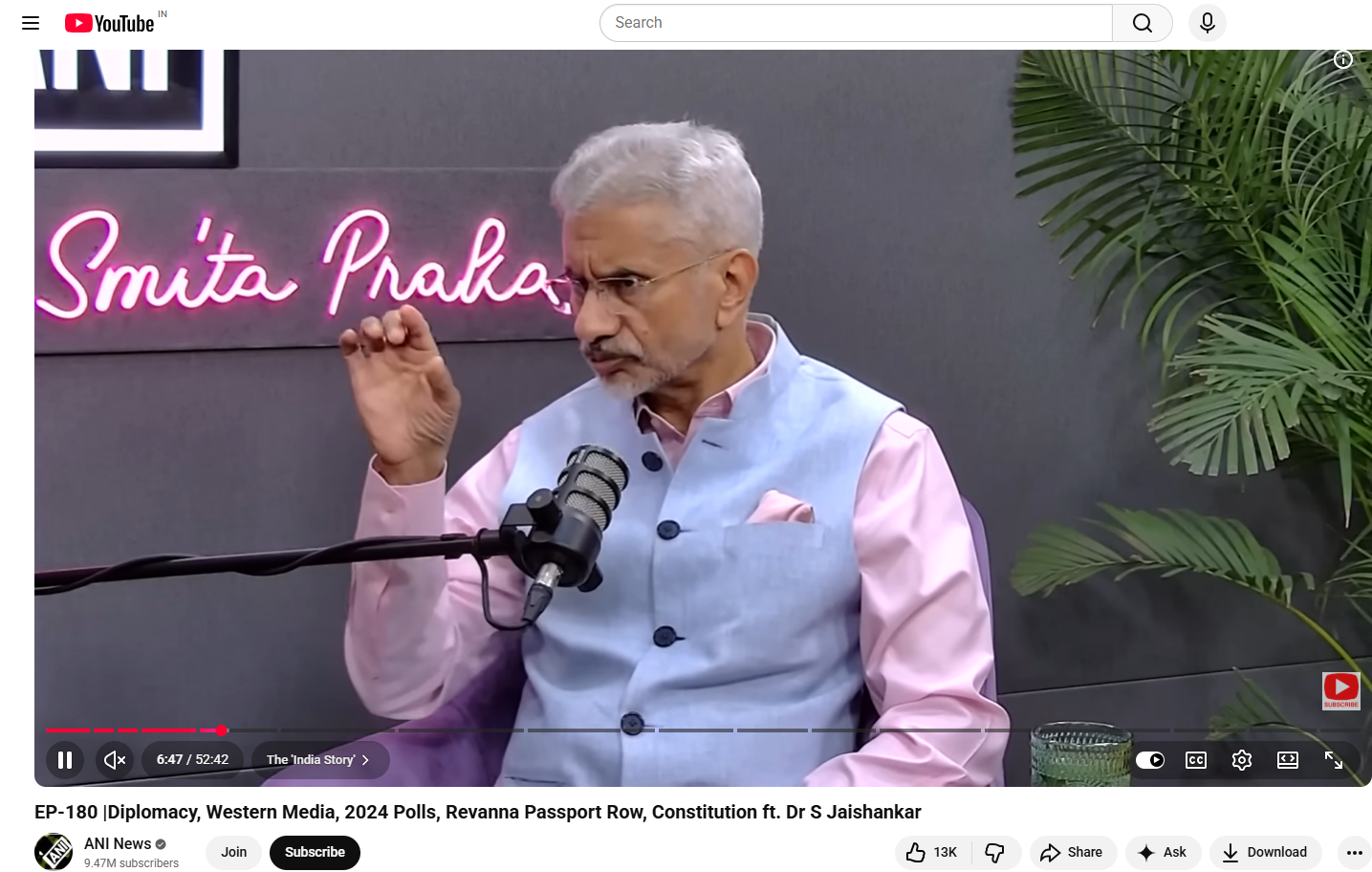

A review of the original interview on ANI’s YouTube channel shows that the conversation between Smita Prakash and S. Jaishankar was uploaded on May 24, 2024—well before Operation Sindoor.

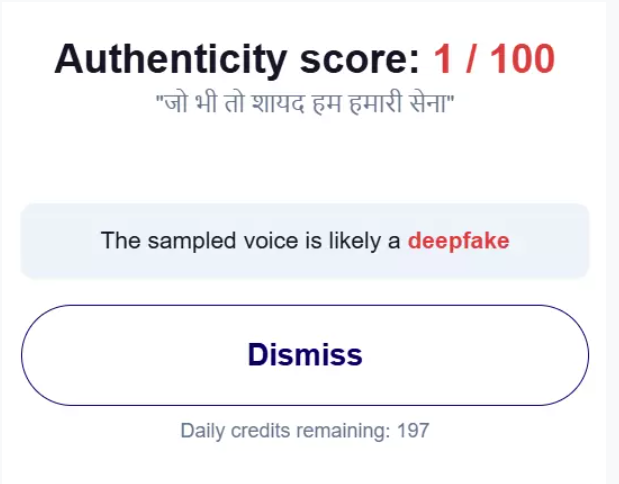

Operation Sindoor reportedly began on May 7, 2025. In the original video, there is no mention of Operation Sindoor or any Pakistani counter-response, making the viral claim baseless. Further analysis using AI detection tools such as Hive Moderation and Hiya indicated that the audio in the viral clip is likely AI-generated, suggesting manipulation of the original content.
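The timeline argument above can be expressed as a simple date comparison. The following is a minimal sketch using only the two dates reported in this fact check; the function name `could_discuss` is hypothetical and chosen purely for illustration, and the upload date is used as a stand-in for when the interview was recorded.

```python
from datetime import date

# Dates as reported in this fact check.
interview_uploaded = date(2024, 5, 24)  # original interview on ANI's YouTube channel
operation_started = date(2025, 5, 7)    # reported start of Operation Sindoor


def could_discuss(recorded_on: date, event_on: date) -> bool:
    """Return True only if the event had already begun when the recording was published."""
    return recorded_on >= event_on


# Number of days by which the interview predates the operation.
gap_days = (operation_started - interview_uploaded).days

print(could_discuss(interview_uploaded, operation_started))  # False
print(gap_days)  # 348
```

Because the interview predates the operation by 348 days, any reference to Operation Sindoor in the viral clip cannot come from the original recording.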

Conclusion

The viral video is fake. AI-generated audio has been used to alter an old interview and falsely attribute statements to External Affairs Minister S. Jaishankar.