#FactCheck - Edited Video Falsely Attributed to Arnab Goswami; Claim of Remarks Against Prime Minister Modi Is Misleading

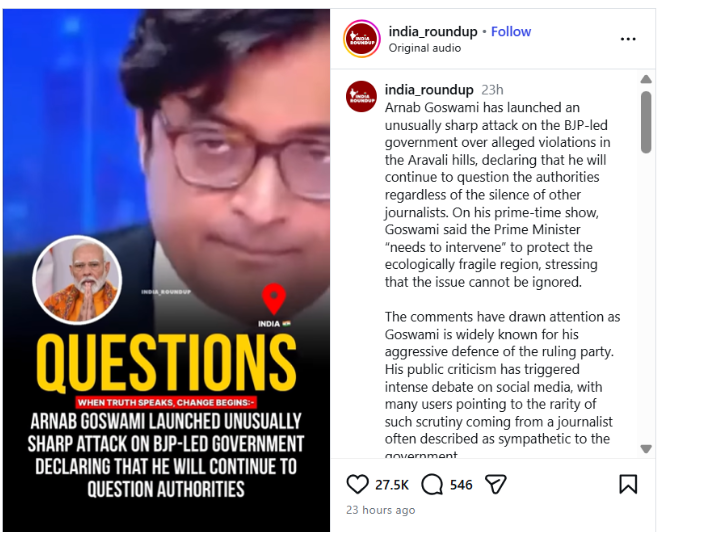

A video has been going viral on social media in recent days in which Republic TV’s Editor-in-Chief and anchor Arnab Goswami can allegedly be heard using objectionable language against Prime Minister Narendra Modi. While sharing the video, users are claiming that Arnab Goswami publicly made controversial remarks about the Prime Minister.

An investigation by CyberPeace Foundation found this claim to be false. Our probe revealed that the viral video is edited and is being circulated on social media with a misleading narrative. In the original video, Arnab Goswami was not making a statement of his own; he was referring to an old statement made by Congress leader Rahul Gandhi.

Viral Claim

An Instagram user posted this video on 5 January 2026. In the video, a voice resembling Arnab Goswami is heard saying, “Ye jo Narendra Modi hain, ye chhe mahine baad ghar se nahi nikal paayenge aur Hindustan ke log inhein danda maarenge.”

(Translation: “This Narendra Modi will not be able to step out of his house after six months, and the people of India will beat him with sticks.”)

The post link, its archive link, and screenshots can be seen below:

- Instagram link: https://www.instagram.com/reel/DTHrO7bk7Rf/?igsh=MThzbzBlcm82eWN0ZA%3D%3D

- Archive link: https://archive.ph/oaYsf

Fact Check

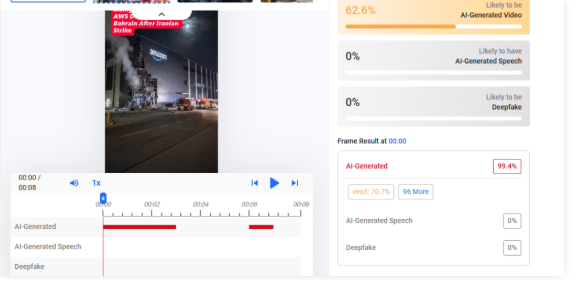

To verify the viral claim, we first examined the video using Google Lens. This led us to a video published on 18 July 2024 on the official YouTube channel of Republic Bharat, which turned out to be the longer version of the viral clip.

Watching the full video made it clear that Arnab Goswami was not making the statement himself. Instead, he was referring to a remark that Congress leader Rahul Gandhi made against Prime Minister Narendra Modi during the 2020 Delhi Assembly elections. This confirms that the viral video was clipped and presented out of context.

The related video link can be seen below: https://www.youtube.com/shorts/KlQV25-3l8s

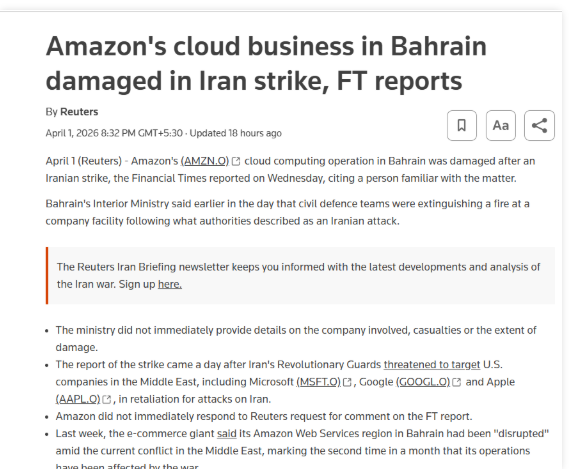

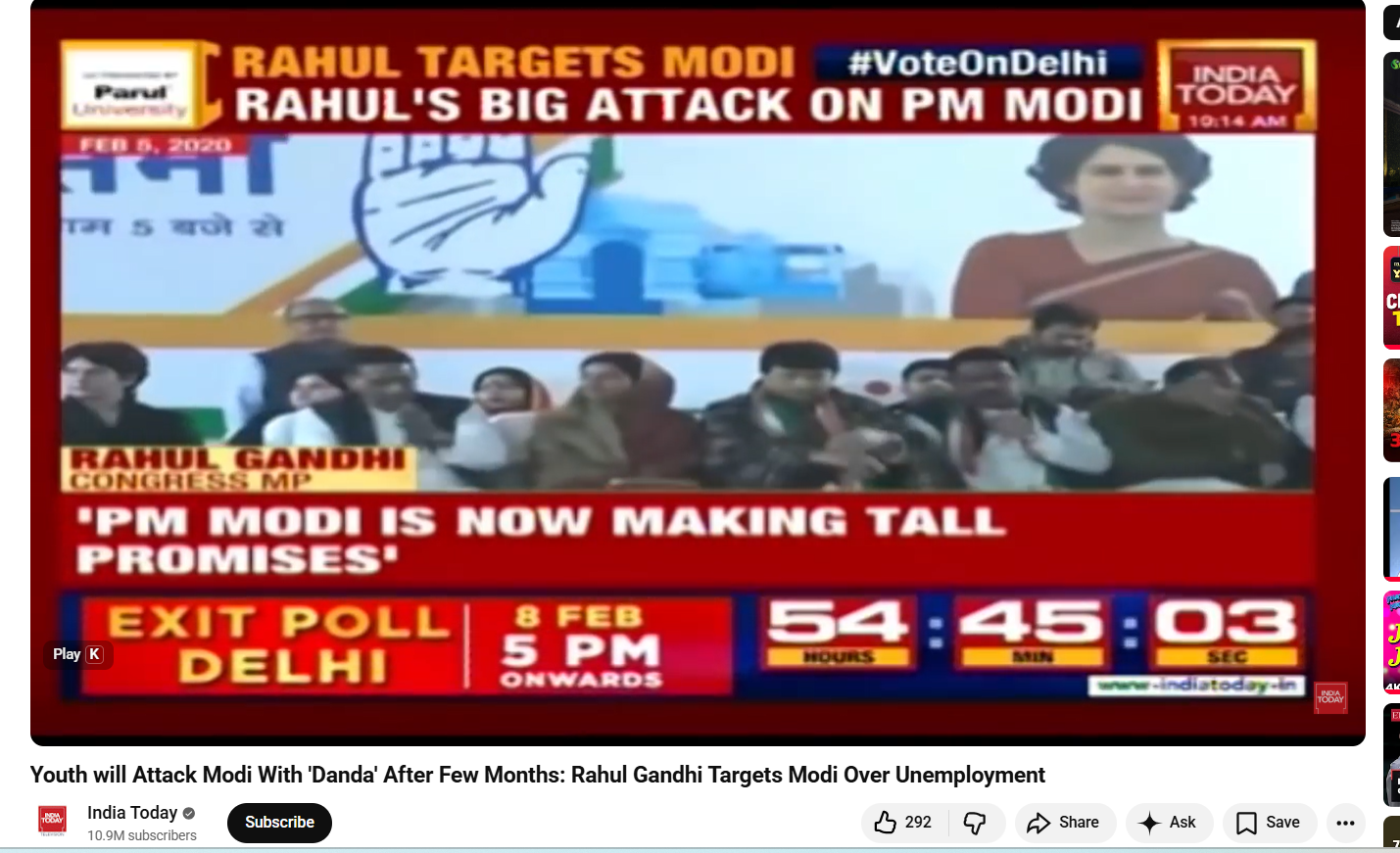

In the next step of the investigation, to verify whether Rahul Gandhi had indeed made such a statement, we ran a targeted keyword search on Google. This surfaced a video published on 6 February 2020 on the official YouTube channel of India Today.

In this video, recorded during a public event ahead of the 2020 Delhi Assembly elections, Rahul Gandhi is seen sharply attacking Prime Minister Narendra Modi, stating that if the Prime Minister fails to resolve the issue of unemployment in the country, the youth would beat him with sticks.

The video link is given below: https://www.youtube.com/watch?v=t5qCSA5nG9Y

Conclusion

The CyberPeace Foundation’s investigation found this claim to be false. The viral video is edited and is being shared in a misleading context. In the original video, Arnab Goswami was referring to an old statement made by Rahul Gandhi. That segment was selectively clipped and presented in a way that falsely suggests Arnab Goswami himself made objectionable remarks against Prime Minister Narendra Modi.
