#FactCheck - Viral Video Showing Man Frying Bhature on His Stomach Is AI-Generated

A video circulating on social media shows a man allegedly rolling out bhature on his stomach and then frying them in a pan. The clip is being shared with a communal narrative, with users making derogatory remarks while falsely linking the act to a particular community.

CyberPeace Foundation’s research found the viral claim to be false. Our probe confirms that the video is not real but has been created using artificial intelligence (AI) tools and is being shared online with a misleading and communal angle.

Claim

On January 5, 2025, several users shared the viral video on the social media platform X (formerly Twitter). One such post carried a communal caption suggesting that the person shown in the video does not belong to a particular community and making offensive remarks about hygiene and food practices.

- The post link and archived version can be viewed here: https://x.com/RightsForMuslim/status/2008035811804291381

- Archive Link: https://archive.ph/lKnX5

Fact Check:

A close examination of the viral video revealed several visual inconsistencies and unnatural movements, raising suspicion about its authenticity. Such anomalies are commonly associated with AI-generated or digitally manipulated content.

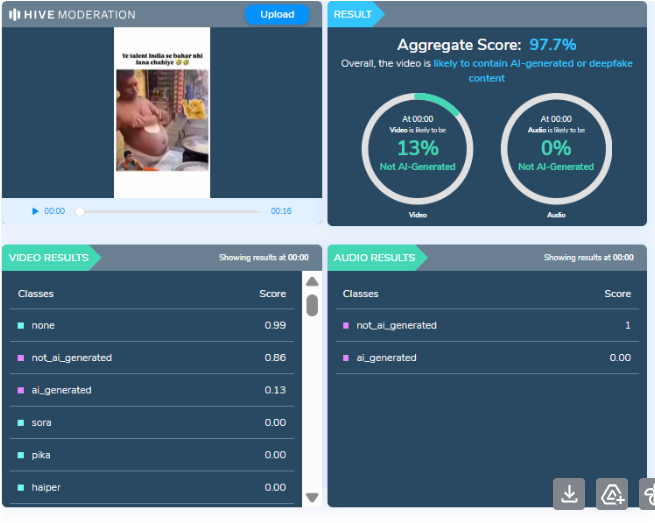

To verify this, the video was analysed using the AI detection tool HIVE Moderation. The tool rated the video as 97 percent likely to be AI-generated, strongly indicating that it was synthetically created rather than recorded in real life.

Conclusion

CyberPeace Foundation’s research clearly establishes that the viral video is AI-generated and does not depict a real incident. The clip is being deliberately shared with a false and communal narrative to mislead users and spread misinformation on social media. Users are advised to exercise caution and verify content before sharing such sensational and divisive material online.

Related Blogs

Introduction

The digital realm offers unlimited opportunities, but it also exposes us to potential cyber threats and scams. A recent incident involving a businessman in Pune serves as a stark reminder of this reality. The victim fell prey to a sophisticated online impersonation fraud, in which a criminal posed as a high-ranking official from Hindustan Petroleum Corporation Limited (HPCL). This cautionary tale exposes the inner workings of the scam and highlights the critical need for constant vigilance in the virtual world.

Unveiling the scam

It all began with a phone call received by the victim, who lives in Taware Colony, Pune, on September 5, 2023. The caller identified himself as "Manish Pande, department head of HPCL." The victim had been searching online for an LPG agency, and the fraudster used that search to his advantage: he claimed to be looking for potential partners and locations to establish a new LPG cylinder agency in Pune.

Enthralled by the illusion

The victim fell for the scam, convinced by the mere presence of "HPCL" in the bank account's name. He first transferred Rs 14,500 online as "registration fees." Things got worse when, suspecting nothing, he transferred a further Rs 1,48,200 on September 11 for a so-called "dealership certificate." To add to the charade of legitimacy, the fraudster even sent the victim registration and dealership certificates via email.

Adding to the deception, the fraudster, who had targeted the victim after discovering his online inquiry, requested photos of the victim's property and personal documents, including Aadhaar and PAN cards, educational certificates, and a cancelled cheque. These seemingly legitimate requests only served to reinforce the victim's belief in the scam.

The fraudster said HPCL was looking for a place to allot a new LPG cylinder agency in Pune and wanted to see whether the victim’s property fit its criteria. The victim agreed, as it appeared to be a profitable business opportunity. The fraudster later called the victim to “confirm” that his documents had been verified and assured him that HPCL would be allotting him an LPG cylinder agency. On September 12, the fraudster again demanded a sum of money, this time for the issuance of an "HPCL license."

When the victim responded that he did not have the money, the fraudster insisted on an immediate payment of at least 50 per cent of the stipulated amount. The victim then transferred Rs 1,95,200 online. The following day, September 13, 2023, the fraudster asked the victim for the remaining amount. The victim said he would arrange the money in a few days. Meanwhile, on the same day, the victim went to HPCL’s office in the Pune Camp area with the documents he had received by email. The HPCL employees confirmed that these documents were fake, even though they looked very similar to the originals. This disclosure was a pivotal moment: the victim grasped the full extent of the deceit and went on to pursue action against the cybercriminal.
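As a quick check on the scale of the loss, the three transfers described above can be totalled. The sketch below only sums the amounts named in this account; the labels are shorthand introduced here for illustration.

```python
# Amounts (in rupees) the victim transferred, as described in the account above.
# The dictionary keys are shorthand labels chosen for this illustration.
transfers = {
    "registration fees": 14_500,
    "dealership certificate": 148_200,        # Rs 1,48,200 in Indian notation
    "HPCL license (part payment)": 195_200,   # Rs 1,95,200 in Indian notation
}

total = sum(transfers.values())
print(f"Total transferred: Rs {total:,}")  # prints "Total transferred: Rs 357,900"
```

The total of Rs 3,57,900 is a little over Rs 3.5 lakh.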

Best Practices

- Verify Caller Identity: Prioritize confirming the identity of anyone reaching out to you, especially when conducting financial transactions. Hold back from divulging confidential information until you have verified the credibility of the request.

- Utilize Official Channels: Communicate with businesses or governmental organizations through the verified contact details found on their official websites or in other trustworthy sources. Avoid relying solely on information gathered from online searches.

- Maintain Skepticism with Unsolicited Communication: Exercise caution when approached by unexpected calls or emails, particularly those related to monetary transactions. Beware of manipulative tactics that scammers use to pressure you into swift decisions.

- Double-Check Information: Independently validate the information given by the caller by cross-referencing the details with the official source. If you come across any suspicious activity, do not hesitate to report it to the proper authorities.

- Report Suspicious Activities: Reporting aids investigations, helps the victim, and prevents similar incidents from occurring. It is crucial to report cyber crimes promptly so that law enforcement agencies can take appropriate action. A powerful resource available to victims of cybercrime is the National Cyber Crime Reporting Portal, equipped with a 24x7 helpline number, 1930. This portal serves as a centralized platform for reporting cybercrimes, including financial fraud.

Conclusion

This alarming event serves as a powerful wake-up call to the constant danger posed by online fraud. It is crucial for individuals to remain sceptical, diligently verifying the credibility of unsolicited contacts and steering clear of sharing personal information on the internet. As technology continues to evolve, so do the strategies of cyber criminals, heightening the need for users to stay on guard and knowledgeable in the complex digital world.

References:

- https://indianexpress.com/article/cities/pune/cybercriminal-posing-hindustan-petroleum-official-cheat-pune-man-9081057/

- https://www.timesnownews.com/mirror-now/crime/pune-man-duped-of-rs-3-5-lakh-by-cyber-fraudster-impersonating-hpcl-official-article-106253358

Introduction

The 2023-24 annual report of the Union Home Ministry states that WhatsApp is among the primary platforms being targeted for cyber fraud in India, followed by Telegram and Instagram. Cybercriminals have been conducting frauds such as lending and investment scams, digital arrests, romance scams, job scams, and online phishing through these platforms, creating trauma for victims and overburdening law enforcement, which is not always well equipped to recover their money. WhatsApp’s scale, end-to-end encryption, and ease of mass messaging make it both a powerful medium of communication and a vulnerable target for bad actors. It has over 500 million users in India, which makes it a prime target for scammers running illegal lending apps, phishing schemes, and identity fraud.

Action Taken by WhatsApp

As a response to this worrying trend and in keeping with Rule 4(1)(d) of the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021, [updated as of 6.4.2023], WhatsApp has been banning millions of Indian accounts through automated tools, AI-based detection systems, and behaviour analysis, which can detect suspicious activity and misuse. In July 2021, it banned over 2 million accounts. By February 2025, this number had shot up to over 9.7 million, with 1.4 million accounts removed proactively, that is, before any user reported them. While this may mean that the number of attacks has increased, or WhatsApp’s detection systems have improved, or both, what it surely signals is the acknowledgement of a deeper, systemic challenge to India’s digital ecosystem and the growing scale and sophistication of cyber fraud, especially on encrypted platforms.
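To put the figures above in proportion, a rough calculation of the growth in bans and of the proactive share is sketched below. Each input is quoted in the text as "over" the stated value, so the results are approximations rather than exact platform statistics; the variable names are introduced here for illustration.

```python
# Figures quoted above; each is reported as "over" the stated value,
# so the derived ratios are approximate.
banned_july_2021 = 2_000_000
banned_feb_2025 = 9_700_000
proactive_feb_2025 = 1_400_000

# How many times larger the February 2025 figure is than the July 2021 figure.
growth_factor = banned_feb_2025 / banned_july_2021

# Share of February 2025 bans made before any user report.
proactive_share = proactive_feb_2025 / banned_feb_2025

print(f"Growth in bans: about {growth_factor:.2f}x")
print(f"Proactive share: about {proactive_share:.1%}")
```

On these figures, bans grew nearly fivefold, and roughly one in seven of the February 2025 bans was proactive.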

CyberPeace Insights

- Under Rule 4(1)(d) of the IT Rules, 2021, significant social media intermediaries (SSMIs) are required to implement automated tools to detect harmful content. But enforcement has been uneven. WhatsApp’s enforcement action demonstrates what effective compliance with proactive moderation can look like because of the scale and transparency of its actions.

- Platforms must treat fraud not just as a content violation but as a systemic abuse of the platform’s infrastructure.

- India is not alone in facing this challenge. The EU’s Digital Services Act (DSA), for instance, requires large platforms to conduct regular risk assessments, maintain algorithmic transparency, and allow independent audits of their safety mechanisms. These steps go beyond just removing bad content by addressing the design of the platform itself. India can draw from this by codifying a baseline standard for fraud detection, requiring platforms to publish detailed transparency reports, and clarifying the legal expectations around proactive monitoring. Importantly, regulators must ensure this is done without compromising encryption or user privacy.

- WhatsApp’s efforts are part of a broader, emerging ecosystem of threat detection. The Indian Cyber Crime Coordination Centre (I4C) is now sharing threat intelligence with platforms like Google and Meta to help take down scam domains, malicious apps, and sponsored Facebook ads promoting illegal digital lending. This model of public-private intelligence collaboration should be institutionalized and scaled across sectors.

Conclusion: Turning Enforcement into Policy

WhatsApp’s mass account ban is not just about enforcement but an example of how platforms must evolve. As India becomes increasingly digital, it needs a forward-looking policy framework that supports proactive monitoring, ethical AI use, cross-platform coordination, and user safety. The digital safety of users in India and those around the world must be built into the architecture of the internet.

References

- https://scontent.xx.fbcdn.net/v/t39.8562-6/486805827_1197340372070566_282096906288453586_n.pdf?_nc_cat=104&ccb=1-7&_nc_sid=b8d81d&_nc_ohc=BRGwyxF87MgQ7kNvwHyyW8u&_nc_oc=AdnNG2wXIN5F-Pefw_FTt2T4K6POllUyKpO7nxwzCWxNgQEkVLllHmh81AHT2742dH8&_nc_zt=14&_nc_ht=scontent.xx&_nc_gid=iaQzNQ8nBZzxuIS4rXLOkQ&oh=00_AfEnbac47YDXvymJ5vTVB-gXteibjpbTjY5uhP_sMN9ouw&oe=67F95BF0

- https://scontent.xx.fbcdn.net/v/t39.8562-6/217535270_342765227288666_5007519467044742276_n.pdf?_nc_cat=110&ccb=1-7&_nc_sid=b8d81d&_nc_ohc=aj6og9xy5WQQ7kNvwG9Vzkd&_nc_oc=AdnDtVbrQuo4lm3isKg5O4cw5PHkp1MoMGATVpuAdOUUz-xyJQgWztGV1PBovGACQ9c&_nc_zt=14&_nc_ht=scontent.xx&_nc_gid=gabMfhEICh_gJFiN7vwzcA&oh=00_AfE7lXd9JJlEZCpD4pxW4OOc03BYcp1e3KqHKN9-kaPGMQ&oe=67FD6FD3

- https://www.hindustantimes.com/india-news/whatsapp-is-most-used-platform-for-cyber-crimes-home-ministry-report-101735719475701.html

- https://www.indiatoday.in/technology/news/story/whatsapp-bans-over-97-lakhs-indian-accounts-to-protect-users-from-scam-2702781-2025-04-02

The Expanding Governance Challenge of Artificial Intelligence

Artificial intelligence (AI) systems are increasingly embedded in economic and social infrastructure. They are being adopted in financial services, healthcare diagnostics, hiring systems, and public administration. But while these systems improve efficiency and decision-making, they also introduce new forms of technological risk.

Unlike conventional software, AI systems learn patterns from data and continue to evolve as they run. This poses governance issues, since risks can arise throughout the AI lifecycle, whether during development or in deployment.

Recent regulatory frameworks, such as the European Union’s AI Act (EU AI Act) and the UNESCO Recommendation on the Ethics of Artificial Intelligence, note that responsible AI governance depends on understanding where risks emerge across the development process.

Using the lifecycle framework developed by the Organisation for Economic Co-operation and Development (OECD), this article maps the AI system lifecycle, identifies the risks that emerge at each stage, and evaluates the policy tools used to mitigate them.

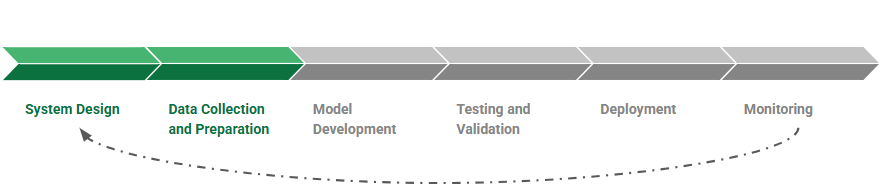

The Lifecycle of an AI System

AI systems are developed through a structured process that includes problem definition, dataset collection and preparation, model development, testing and validation, deployment, and monitoring.

The OECD conceptualises this development process as the AI system lifecycle. Each stage entails various technical and administrative procedures, since choices made during these stages will dictate the goals and limits of an AI system. Further, the quality and representativeness of training sets will have a strong effect on the behaviour of models after implementation.

Since this is an iterative rather than a linear process, risks can be introduced at each stage of the AI lifecycle. Models can be retrained on new data, and systems are regularly updated after deployment to address performance degradation, model errors, or unintended outputs. This iterative process means governance must address risks across the entire lifecycle, not just at deployment.

Where AI Risks Emerge

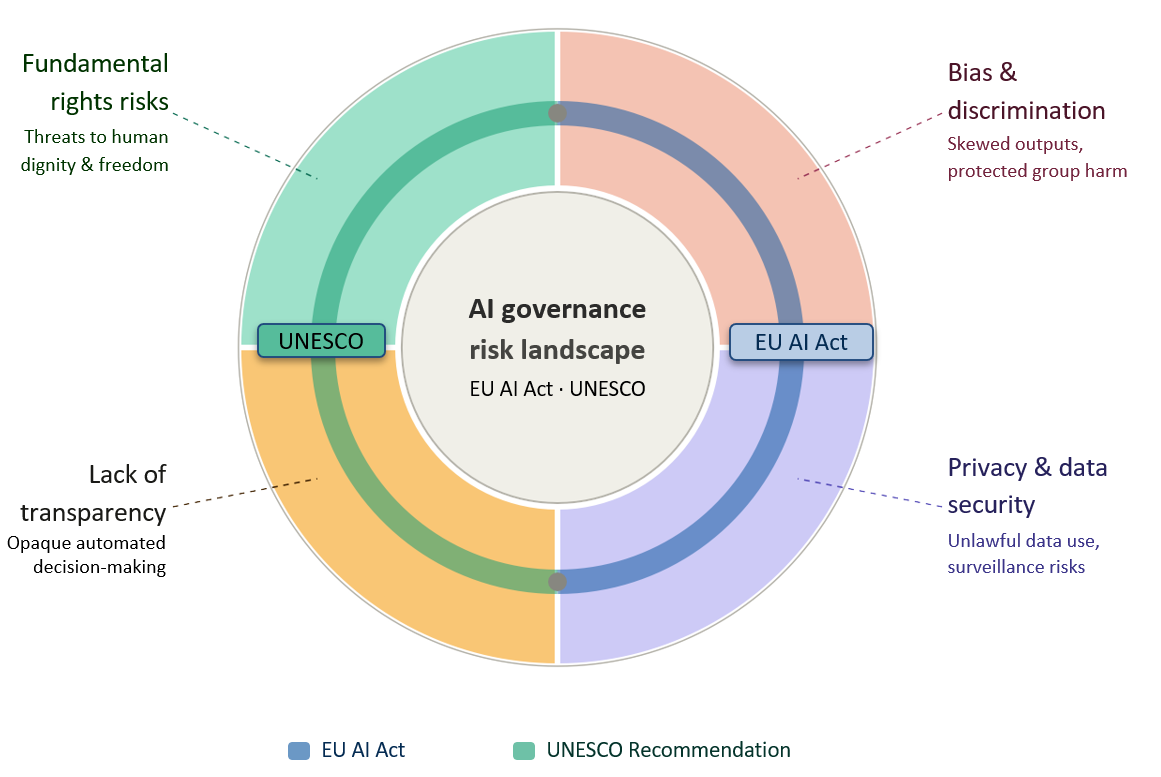

AI risks often emerge early in the development process, especially in the phases when system objectives are formulated and training data are chosen. The EU AI Act and the UNESCO Recommendation on the Ethics of AI outline the following risks: bias and discrimination, privacy and data security violations, the absence of transparency in automated decision-making, and risks to fundamental rights.

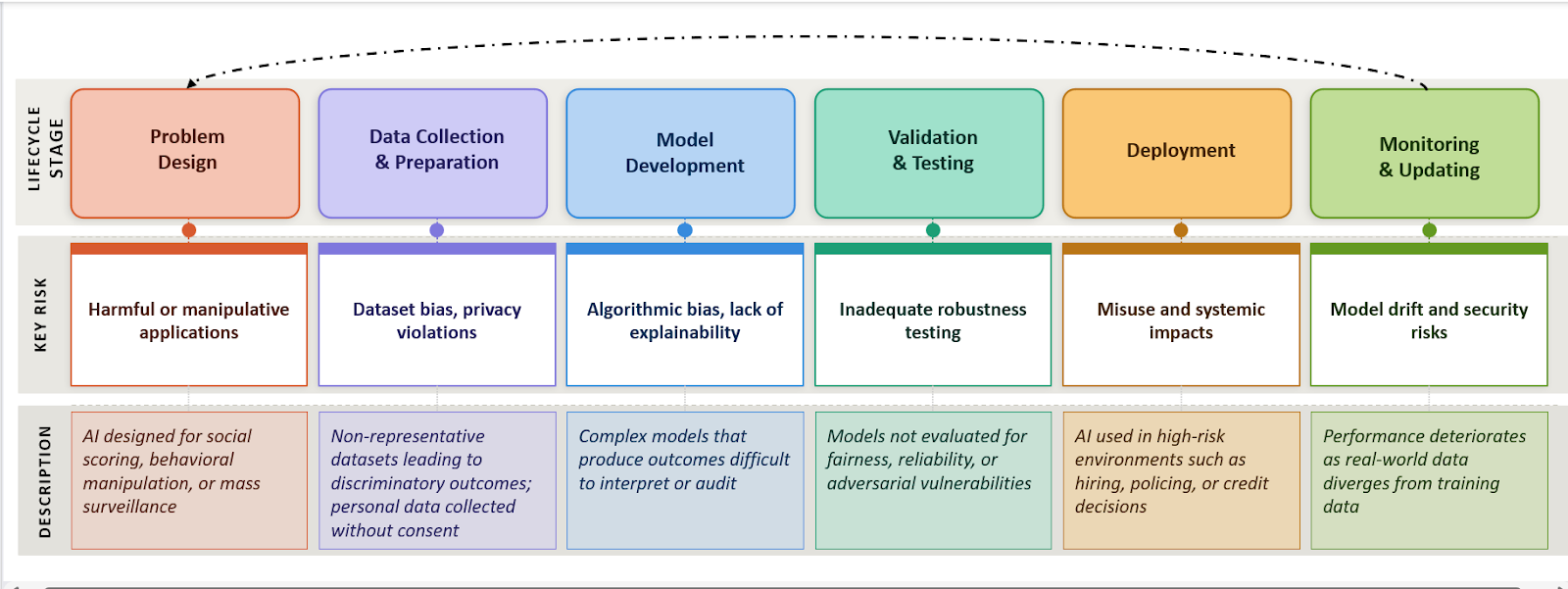

AI Governance Risk Landscape: Core Risk Categories Under International Frameworks

Risk categories jointly identified by the EU AI Act and UNESCO Recommendation on the Ethics of Artificial Intelligence

Mapping risks across the AI lifecycle helps identify where governance interventions are most necessary. For example, discriminatory outcomes often result from biased or unrepresentative training data, while safety failures are typically linked to inadequate testing before deployment. Risks such as misinformation arise after development, when generative AI systems are deployed at scale on digital platforms.
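The pairing of lifecycle stages with risks can be sketched as a simple lookup table. The stage names below follow the list given earlier in this article, and the risks are limited to the three examples in the preceding paragraph; assigning each risk to a single stage is an illustrative assumption, not a classification taken from the OECD, the EU AI Act, or UNESCO.

```python
# Lifecycle stages as listed earlier in the article.
LIFECYCLE_STAGES = [
    "problem definition",
    "dataset collection and preparation",
    "model development",
    "testing and validation",
    "deployment",
    "monitoring",
]

# Risks named in the text, attached to the stage the text associates them with.
# The one-risk-per-stage assignment is an assumption made for illustration.
RISKS_BY_STAGE = {
    "dataset collection and preparation": [
        "discriminatory outcomes from biased or unrepresentative training data",
    ],
    "testing and validation": [
        "safety failures from inadequate testing",
    ],
    "deployment": [
        "misinformation from generative AI deployed at scale",
    ],
}


def risks_for(stage: str) -> list[str]:
    """Return the example risks recorded for a lifecycle stage."""
    if stage not in LIFECYCLE_STAGES:
        raise ValueError(f"unknown lifecycle stage: {stage!r}")
    return RISKS_BY_STAGE.get(stage, [])


for stage in LIFECYCLE_STAGES:
    risks = risks_for(stage) or ["(no example given in the text)"]
    print(f"{stage}: {'; '.join(risks)}")
```

A table like this makes it easy to see which stages have named risks and which the text leaves without an example.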

AI System Lifecycle: Key Risks at Each Stage

Risks identified per the EU AI Act and UNESCO Recommendation on the Ethics of AI

Understanding where risks emerge across the lifecycle explains why governance frameworks classify AI systems by risk and apply oversight at multiple stages.

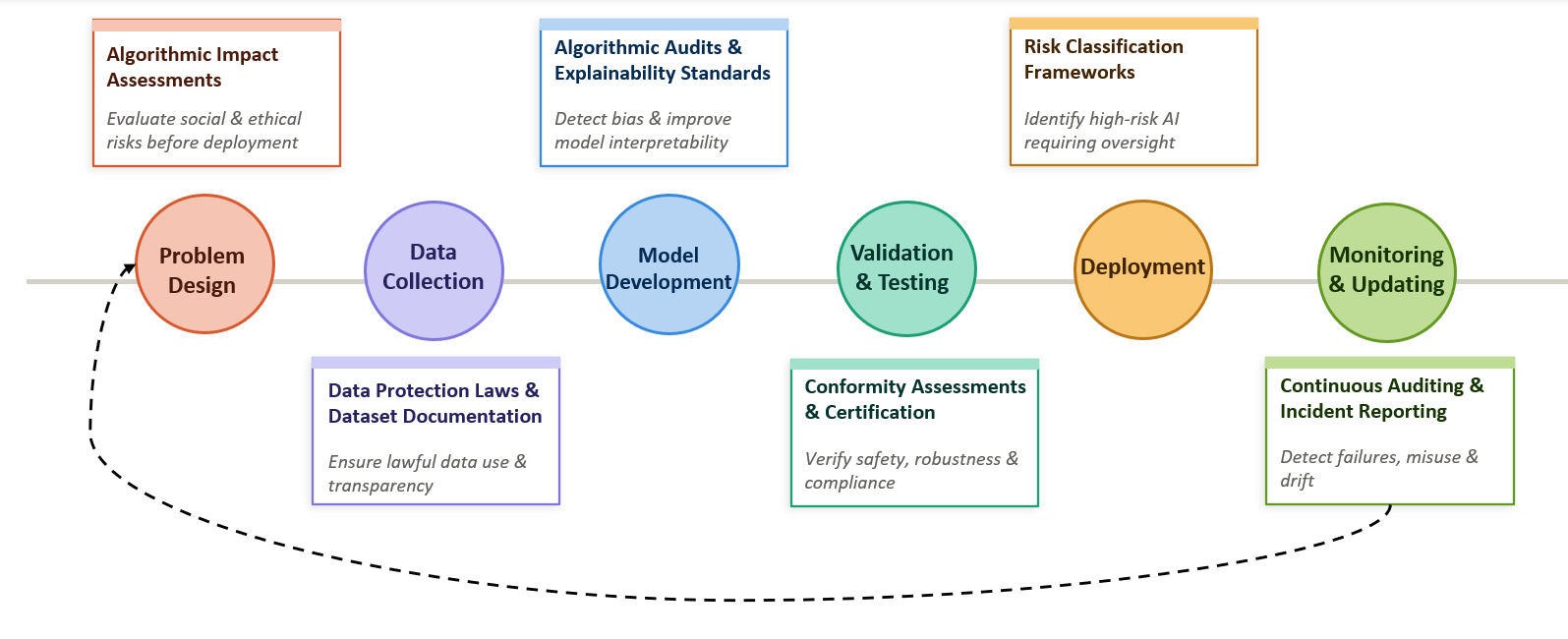

Policy Tools for Mitigating AI Risks

Governments and international organisations have developed regulatory tools to help mitigate AI risks across the lifecycle. These tools are meant to ensure that AI technologies meet standards of safety, accountability and fairness both before and after deployment.

For example, the OECD AI Policy Observatory recommends that governments adopt policy instruments such as risk assessments, algorithmic auditing requirements, regulatory sandboxes, and transparency requirements for AI systems. The European Union’s Artificial Intelligence Act (AI Act) is one of the most comprehensive governance frameworks and introduces a risk-based approach to regulation. It mandates adherence to requirements concerning data governance, documentation, human oversight, robustness, and cybersecurity. Such requirements introduce regulatory checkpoints into the lifecycle of AI systems.

Mapping these policy tools across the lifecycle illustrates how governance mechanisms can intervene at different stages of AI development.

Governance Overlay: Policy Interventions Across the AI Lifecycle

Regulatory tools mapped at each stage of AI development per the EU AI Act and UNESCO Recommendation on the Ethics of AI

Several policy tools are directed at risks that arise in the early stages of development. For example, algorithmic impact assessments have been applied in various jurisdictions to gauge the possible consequences of automated decision systems for society before implementation. Similarly, dataset documentation requirements, including dataset transparency requirements and model cards, aim to enhance accountability during the training and development stages of AI systems. Lifecycle-based policy design therefore allows regulators to intervene before harmful outcomes occur, rather than responding only after AI systems have caused damage in real-world environments.

The Policy Gap in AI Governance

The misalignment between risks and governance tools across the AI lifecycle indicates a critical structural gap in existing regulations. Many governance processes are triggered only after AI systems are classified as “high risk” or after they are deployed in the real world. But the most serious sources of harm have their roots in earlier stages of the development process.

For example, biased or unbalanced training data almost inevitably produces discriminatory results in automated decision systems. When such models are applied in areas like hiring, credit rating, or the provision of public services, these biases can quickly spread to large populations and undermine democratic rights. Similarly, a lack of transparency in model design can leave regulators and affected individuals unable to scrutinise the decision-making process. This reflects a broader timing gap in AI governance, where risks originate during design and development, but regulatory intervention typically occurs only after deployment.

Analysis

1. Key risks originate before deployment: As the lifecycle mapping shows, the data collection and model development phases carry several significant governance risks that precede deployment. Biased datasets, incomplete documentation of training data, and opaque model designs can entrench structural problems in AI systems before they are ever used in practice.

2. Data governance is a primary point of vulnerability: Most of the instances of algorithmic discrimination discussed above are associated with training data that under-represents some population groups or reflects historical patterns. Since machine learning models optimise for patterns present in their datasets, these biases can be carried through the whole lifecycle and reproduced after deployment.

3. Regulatory approaches remain mismatched across jurisdictions: Different countries adopt varying approaches to AI governance, ranging from risk-based frameworks such as the EU AI Act to more sector-specific or voluntary guidelines in other regions. This divergence creates inconsistencies in safety, accountability, and enforcement standards, allowing risks to persist across borders and potentially undermining the protection of users in globally deployed AI systems.

4. Governance interventions remain uneven across the lifecycle: While many regulatory instruments target deployment and monitoring, fewer systematically address the risks posed by the earlier design and development phases.

Recommendations

1. Introduce mandatory lifecycle risk assessments: Regulatory frameworks should require systematic risk assessment from the start of AI development, especially at the problem definition and dataset selection phases. This would help detect potentially harmful applications before systems are built and deployed.

2. Strengthen dataset governance standards: Training datasets should be accompanied by documentation of their provenance, composition and limitations. Standardised dataset documentation frameworks can help regulators and auditors identify potential sources of bias or privacy threats.

3. Expand independent algorithmic auditing: AI systems should be assessed through regular third-party audits for fairness, robustness, and security weaknesses. Such auditing is especially relevant for high-risk systems used in employment, finance or public services.

4. Integrate continuous monitoring requirements: AI systems should be monitored regularly after deployment to identify model drift, unintended consequences, or misuse. Reporting mechanisms can help regulators see emerging risks and adjust governance frameworks accordingly.
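The second recommendation, documenting a dataset's provenance, composition and limitations, can be illustrated with a minimal record type. This is a sketch under stated assumptions: the class name, field layout and example values are hypothetical and do not correspond to any existing documentation standard.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical documentation record for a training dataset."""

    name: str
    provenance: str = ""      # where the data came from
    composition: str = ""     # what the data contains and whom it covers
    limitations: list[str] = field(default_factory=list)  # known gaps or biases

    def missing_fields(self) -> list[str]:
        """Return the documentation fields that are still empty."""
        missing = []
        if not self.provenance.strip():
            missing.append("provenance")
        if not self.composition.strip():
            missing.append("composition")
        if not self.limitations:
            missing.append("limitations")
        return missing


# Example values are invented for illustration only.
record = DatasetRecord(
    name="loan-applications-sample",
    provenance="internal records (hypothetical)",
)
print(record.missing_fields())  # prints ['composition', 'limitations']
```

An auditor or regulator could run a check of this kind to flag datasets whose documentation is incomplete before a model is trained on them.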

Conclusion - The Need for Global AI Governance

Despite growing regulatory attention, global AI governance remains fragmented. Different jurisdictions adopt varying approaches to risk classification, oversight, and enforcement, leading to inconsistencies in safety and accountability standards. Given that AI systems are often developed, deployed, and used across borders, this lack of coordination allows risks to persist beyond national regulatory frameworks.

Addressing these challenges requires a shift towards greater international cooperation and lifecycle-based governance. Developing shared standards, improving cross-border regulatory alignment, and embedding oversight across all stages of AI development will be essential to ensuring that AI systems are safe, transparent, and accountable in a globally interconnected environment.
