#FactCheck - Viral Video Claiming Snowfall Near Ambience Mall in Gurugram Is Misleading

A video circulating widely on social media claims to show snowfall near Ambience Mall in Gurugram, Haryana. The clip is being shared alongside assertions that Gurugram witnessed snowfall for the first time in its history amid a severe cold wave in January 2026. However, research by the CyberPeace Foundation has found the claim to be misleading. Our verification reveals that the viral video is not recent and has been available online since March 2023.

The Claim

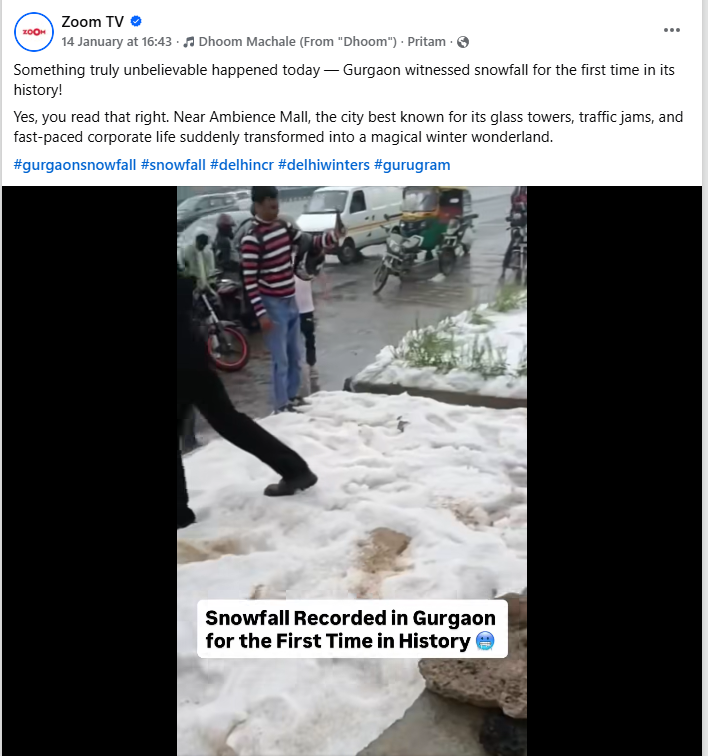

On 14 January 2026, a Facebook user shared the video with the caption, “Something truly unbelievable happened today — Gurgaon witnessed snowfall for the first time in its history!” Through this post, the user implied that the visuals showed snowfall near Ambience Mall during the ongoing cold wave. The post and an archived copy are linked below; a screenshot appears above.

- https://www.instagram.com/reel/DTfS9X9DyBo/?utm_source=ig_embed&ig_rid=239ddaf7-ec53-4b1d-8f3b-a5e39540b3ee

- https://archive.ph/JVjHf

Fact Check:

To verify the claim, we conducted a detailed search using relevant keywords but found no credible media reports or official statements to support it. Although Gurugram’s temperature dropped to 0.6 degrees Celsius amid an IMD-issued cold wave warning, there is no evidence to suggest that the city experienced snowfall or hail. As of January 16, 2026, weather records and official sources confirm that no such weather event occurred in Gurugram.

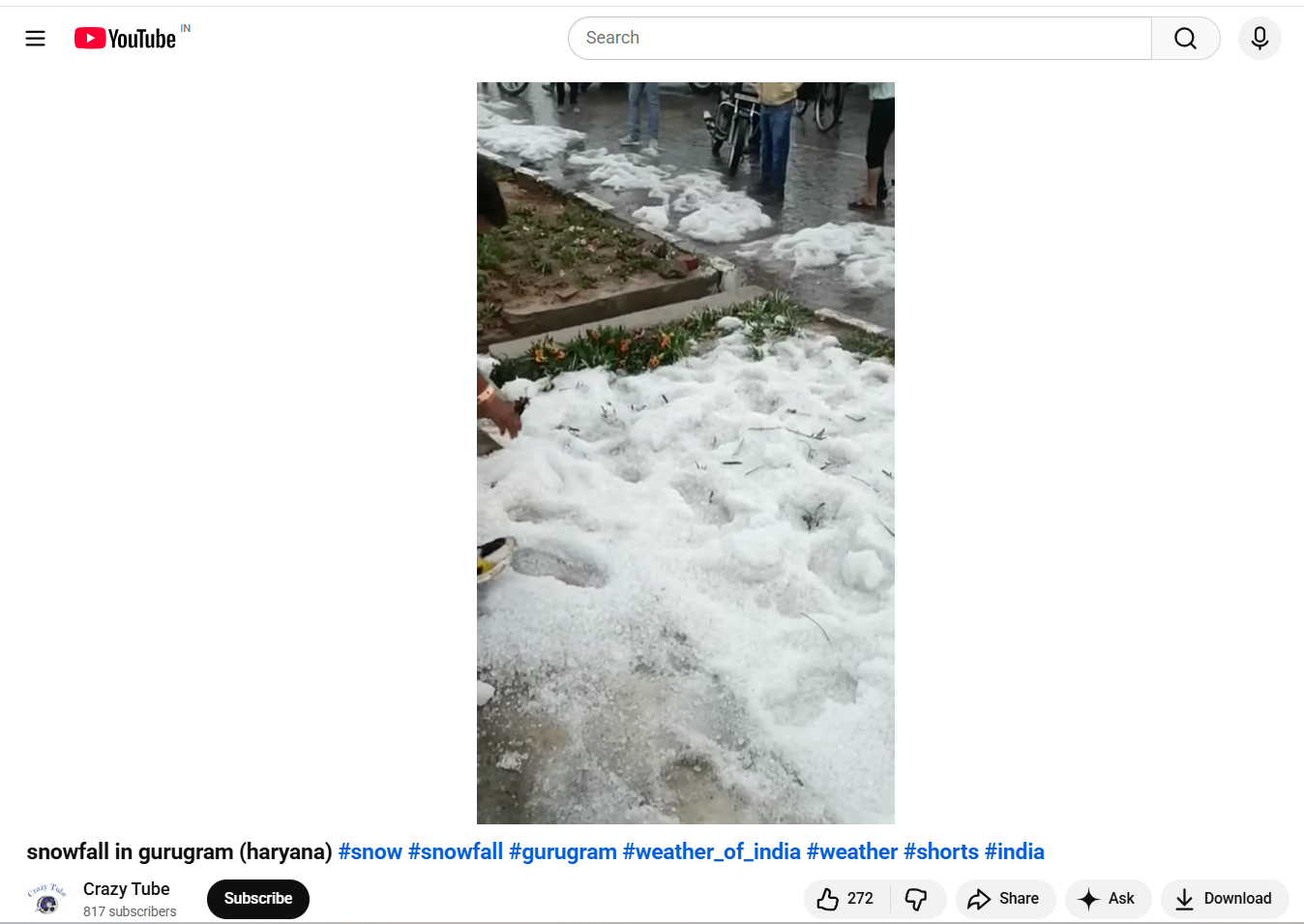

A reverse image search of keyframes extracted from the viral clip traced the same footage to a video uploaded on the YouTube channel Crazy Tube on March 21, 2023. This establishes that the video has been in circulation for nearly three years. In the original upload, the person filming clearly mentions that the visuals were recorded near a toll plaza, further indicating that the clip is unrelated to the recent weather conditions in Gurugram.

We also came across an X (formerly Twitter) post from March 19, 2023, which featured images similar to those seen in the viral video. The post described the visuals as being from a hailstorm in Gurugram, indicating that the content predates the current weather conditions and is unrelated to the recent cold wave.
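The timeline argument here comes down to simple date arithmetic. As a minimal sketch in Python, using only the three dates reported in this fact check (the helper names `age_in_days` and `predates` are illustrative and not part of any tool used in the verification), the gap can be computed like this:

```python
from datetime import date

# Dates taken from the fact check text.
youtube_upload = date(2023, 3, 21)  # upload on the Crazy Tube channel
x_post = date(2023, 3, 19)          # X post describing a hailstorm
viral_post = date(2026, 1, 14)      # post claiming fresh snowfall


def age_in_days(earlier: date, later: date) -> int:
    """Return the number of days by which `earlier` precedes `later`."""
    return (later - earlier).days


def predates(earlier: date, later: date) -> bool:
    """True when footage already online on `earlier` cannot show an event on `later`."""
    return age_in_days(earlier, later) > 0


gap_days = age_in_days(youtube_upload, viral_post)
gap_years = gap_days / 365.25  # approximate conversion, for readability only

print(f"Earliest known copy: {min(youtube_upload, x_post).isoformat()}")
print(f"The YouTube upload predates the viral post by {gap_days} days (~{gap_years:.1f} years)")
```

Because both earlier sightings precede the viral post by well over two years, the footage cannot depict weather from January 2026, whatever it actually shows.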

Conclusion:

The video is old and predates January 2026, and has been on the internet at least since March 2023. While Gurugram recorded a low of 0.6 degrees Celsius amid an IMD cold wave warning, the city did not experience snowfall or hail as of 16 January 2026. News reports from March 2023 confirm heavy rain and hail in Delhi and adjoining areas, including parts of Gurugram, but there is no evidence of snowfall in January 2026. Hence, the claim made in the post is MISLEADING.

Related Blogs

Executive Summary:

The rise in cybercrime targeting vulnerable individuals, particularly students and their families, has reached alarming levels. Impersonation scams, where fraudsters pose as Law Enforcement Officers, have become increasingly sophisticated, exploiting fear, urgency, and social stigma. This report delves into recent incidents of ransom scams involving fake CBI officers, highlighting the execution methods, psychological impact on victims, and preventive measures. The goal is to raise public awareness and equip individuals with the knowledge needed to protect themselves from such fraudulent activities.

Introduction:

Cybercriminals are evolving their tactics, with impersonation and social engineering at the forefront. Scams involving fake law enforcement officers have become rampant, preying on the fear of legal repercussions and the desire to protect loved ones. This report examines incidents where scammers impersonated CBI officers to extort money from families of students, emphasizing the urgent need for awareness, verification, and preventive measures.

Case Study:

This case study explains how scammers impersonate CBI officers to extort money from students' families.

Targets receive calls from scammers posing as CBI officers. The fraudsters mostly target the families of students, using sophisticated impersonation and emotional manipulation tactics. In our case study, the targets received calls from unknown international numbers, falsely claiming that the students, along with their friends, were involved in a fabricated rape case. The calls come during school or college hours, when it is particularly difficult for parents to reach their children, which adds to the panic and sense of urgency. The scammers manipulate the parents by stating that, because of the students' clean records, they have not been officially arrested but will face severe legal consequences unless a sum of money is paid immediately.

Although the parents in these specific cases did not pay the money, many parents in our country fall victim to such scams, paying large sums out of fear and desperation to protect their children’s futures. Exploiting the fear of legal repercussions, social stigma, and potential damage to the students' reputations, the scammers used high-pressure tactics to force compliance.

These incidents may result in significant financial losses, emotional trauma, and a profound loss of trust in communication channels and authorities. This underscores the urgent need for awareness, verification of authority, and prompt reporting of such scams to prevent further victimisation.

Modus Operandi:

- Caller ID Spoofing: The scammer used an unknown number and spoofing techniques to mimic a legitimate law enforcement authority.

- Fear Induction: The fraudster played on the family's fear of social stigma, manipulating them into compliance through emotional blackmail.

Analysis:

Our research found that the unknown international numbers used in these scams are not real but are puppet numbers often used for prank calls and fraudulent activities. This incident also raises concerns about data breaches, as the scammers accurately recited students' details, including names and their parents' information, adding a layer of credibility and increasing the pressure on the victims.

Impact on Victims:

- Financial and Psychological Losses: The family may face substantial financial losses, coupled with emotional and psychological distress.

- Loss of Trust in Authorities: Such scams undermine trust in official communication and law enforcement channels.

- Exploitation of Fear and Urgency: Scammers prey on emotions such as fear, urgency, and social stigma to manipulate victims.

- Sophisticated Impersonation Techniques: Caller ID spoofing, virtual or temporary numbers, and impersonation of law enforcement officers add credibility to the scam.

- Lack of Verification: Victims often do not verify the caller's identity, leading to successful scams.

- Significant Psychological Impact: Beyond financial losses, these scams cause lasting emotional trauma and distrust in institutions.

Recommendations:

- Cross-Verification: Always cross-verify with official sources before acting on such claims. Always contact official numbers listed on trusted Government websites to verify any claims made by callers posing as law enforcement.

- Promote Awareness: Educational institutions should conduct regular awareness programs to help students and families recognize and respond to scams.

- Encourage Prompt Reporting: Reporting such incidents to authorities can help track scammers and prevent future cases. Encourage victims to report incidents promptly to local authorities and cybercrime units.

- Enhance Public Awareness: Continuous public awareness campaigns are essential to educate people about the risks and signs of impersonation scams.

- Educational Outreach: Schools and colleges should include Cybersecurity awareness as part of their curriculum, focusing on identifying and responding to scams.

- Parental Guidance and Support: Parents should be encouraged to discuss online safety and scam tactics with their children regularly, fostering a vigilant mindset.

Conclusion:

The rise of impersonation scams targeting students and their families is a growing concern that demands immediate attention. By raising awareness, encouraging verification of claims, and promoting proactive reporting, we can protect vulnerable individuals from falling victim to these manipulative and harmful tactics. It is high time for the authorities, educational institutions, and the public to collaborate in combating these scams and safeguarding our communities. Strengthening data protection measures and enhancing public education on the importance of verifying claims can significantly reduce the impact of these fraudulent schemes and prevent further victimisation.

Introduction

The digital expanse of the metaverse has recently come under scrutiny following a gruesome incident. In a digital realm crafted for connection and exploration, a 16-year-old girl’s avatar fell victim to an agonising assault that kindled ethico-legal and societal debate. The incident is a stark reminder that the cyberverse, for all its endless possibilities and experiences, also poses glaring challenges that require serious consideration. It involves the virtual gang rape of a sixteen-year-old girl's digital avatar by several metaverse users.

This incident has raised a critical question about the genuine psychological trauma that virtual experiences can inflict. It highlights the strong emotional repercussions of illicit virtual actions: while the girl was not physically harmed, the digital assault can leave lasting scars on her psyche. It also raises questions about the ethical implications of virtual interactions and the responsibility of service providers to protect users' well-being on their platforms.

The Judicial Quagmire

The digital nature of these assaults raises complex jurisdictional questions, which are common in cyber offences. We are still novices in navigating a digital labyrinth where avatars can transcend borders with a click of a mouse. The current legal structure is not equipped to tackle virtual crimes and calls for urgent reform. Policymakers and legal professionals must first define virtual offences and set clear jurisdictional boundaries, ensuring that justice is not hampered by geographical restrictions.

Meta’s Accountability

Meta, on whose platform this gruesome incident occurred, finds itself at an ethical crossroads. The company had implemented several safeguards, but they proved futile in preventing such a harrowing act. The incident has raised several questions about the broader role and responsibilities of tech juggernauts. Chief among those demanding immediate answers is how a company can strike a balance between innovation and the protection of its users.

The Tightrope of Ethics

The metaverse is the epitome of innovation, yet this harrowing incident highlights a fundamental ethical tension. The real challenge is to harness the power of virtual reality while addressing the risks of digital hostilities. Society is still grappling with this conundrum, and stakeholders must work in tandem to formulate robust and effective legal structures that protect the rights and well-being of users. Balancing technological development with ethical safeguards requires collective effort.

Reflections of Society

Beyond legal and ethical considerations, this act calls for wider societal reflection. It emphasises the pressing need for a cultural shift that fosters empathy, digital civility and respect. As we tread deeper into the virtual realm, we must strive to cultivate an ethos that upholds dignity in both the digital and real worlds. This shift is only possible through awareness campaigns, educational initiatives and strong community engagement that foster a culture of respect and responsibility.

Safer and Ethical Way Forward

A multidimensional approach is essential to address the complicated challenges cyber violence poses. Several measures can pave the way for safer cyberspace for netizens.

- Legislative Reforms - There is an urgent need to revamp legislative frameworks so that they can effectively address the complexities of new and emerging virtual offences. Tech companies must collaborate with the government on formulating best practices and help develop standard security measures that prioritise user protection.

- Public Awareness and Engagement - Public awareness campaigns that educate users on crucial issues such as cyber resilience, ethics, digital detox and responsible online behaviour play a critical role in making netizens vigilant, helping them avoid cyber hostilities and assist fellow netizens in distress. Civil society organisations and think tanks such as CyberPeace Foundation are the pioneers of cyber safety campaigns in the country, working in tandem with governments across the globe to curb cyber hostilities.

- Interdisciplinary Research - Policymakers should delve deeper into the ethical, psychological and societal ramifications of digital interactions. A multidisciplinary approach to research is crucial for formulating evidence-based policy.

Conclusion

The digital gang rape is a wake-up call demanding bold measures to confront the intricate legal, societal and ethical pitfalls of the metaverse. As we navigate the digital labyrinth, our collective decisions will help shape the metaverse's future. By nurturing a culture of empathy, responsibility and innovation, we can forge a path that honours the dignity of netizens, upholds ethical principles and fosters a vibrant and safe cyberverse. In this significant movement, ethical vigilance, diligence and active collaboration are indispensable.

References:

- https://www.thehindu.com/sci-tech/technology/virtual-gang-rape-reported-in-the-metaverse-probe-underway/article67705164.ece

- https://thesouthfirst.com/news/teen-uk-girl-virtually-gang-raped-in-metaverse-are-indian-laws-equipped-to-handle-similar-cases/

Introduction

" सर्वे भवन्तु सुखिनः, सर्वे सन्तु निरामयाः " May all be happy, may all be free from suffering. This timeless invocation reflects a vision of collective well-being, where progress is meaningful only when shared, and protection extends to every individual in society. This very philosophy lies at the heart of Corporate Social Responsibility, which seeks to ensure that growth is not isolated or unequal, but inclusive, ethical, and mindful of the broader social good.

At its core, Corporate Social Responsibility is not merely a statutory obligation; it reflects a deeper ethical commitment, an acknowledgement that growth must carry with it a sense of duty towards society. In many ways, CSR embodies the idea that progress without responsibility is incomplete, and that corporations, as key actors shaping modern life, must help safeguard the very communities they engage with.

Reframing Digital Literacy Through Cyber Safety in CSR Frameworks

In India, this moral vision has been given a legal structure under the Companies Act, 2013, which mandates certain classes of companies to allocate a portion of their profits towards socially beneficial activities. Section 135 of the Act requires companies meeting specified financial thresholds to undertake CSR initiatives, guided by principles of inclusivity, sustainability, and social welfare. The underlying values are clear: CSR is intended not as charity, but as a strategic and accountable contribution to societal development.

Schedule VII of the Act further outlines the broad areas that qualify as CSR, including “Education and Digital Literacy”, gender equality, rural development, and measures for reducing inequalities. Within this framework, promoting “digital literacy” has increasingly been recognised as a legitimate and necessary CSR activity, especially in the context of a rapidly digitising society like India.

However, the current understanding of digital literacy within CSR remains incomplete. It often emphasises access and usage, teaching individuals how to navigate digital platforms, use devices, and engage with online services. What remains insufficiently addressed is the question of safety. In an environment where cyber fraud, data breaches, online harassment, and identity theft are becoming increasingly common, digital literacy without cyber awareness risks becoming a partial and potentially harmful intervention.

Explicitly embedding cyber awareness and capacity building within ‘digital literacy’ is therefore not optional; it is essential. This includes equipping individuals with the ability to recognise online threats, protect personal data, understand digital consent, and respond effectively to cyber risks. It also requires recognising that vulnerable populations, including first-time internet users, women, and marginalised communities, often face disproportionate exposure to cyber harm.

“It is pertinent to note that Cybersecurity awareness training is relevant to CSR but is not yet consistently implemented as an explicit CSR activity. It is often included indirectly within digital literacy programs, highlighting the need for a more structured, progressive and integrated approach.”

Given this reality, there is a strong case for explicitly recognising cyber awareness as a distinct and integral component of CSR activities, rather than treating it as an implicit subset of digital literacy. Doing so would not only align CSR with contemporary societal risks but also ensure that corporate interventions move beyond enabling access to actively ensuring safety.

In a digital society, empowerment without protection is incomplete. If CSR is to truly reflect its foundational values, it must evolve to address not just the opportunities of the digital age, but also its risks.

Why Cyber Safety Must Be Central to CSR

Digital ecosystems once operated as secondary systems; today they are essential infrastructure supporting government operations, banking, education, and social communication. Their vulnerabilities create direct dangers for people. The elderly, first-time internet users, and rural communities face higher cyber threat risks because they often lack the knowledge and protective resources needed for responsible use. CSR initiatives that give these groups digital access will do far more for their safety if they also teach them how to handle risks. Organisations should therefore build cyber safety training into their CSR programs, which creates value while fulfilling their ethical obligations. Under the "do no harm" principle, empowerment is complete only when it also protects people from the dangers that come with access.

Key Components of CyberPeace-Aligned Digital Literacy

To make CSR initiatives more effective and future-ready, organisations should incorporate the following elements into their digital literacy programs:

- Cyber Awareness and Risk Recognition: Participants learn to recognise common threats such as phishing, scams, deepfakes and misinformation.

- Data Protection and Privacy Literacy: Users learn how to protect their personal information, how consent works, and how to manage their online presence.

- Responsible Digital Behaviour: Participants learn to use the internet responsibly, making ethical decisions grounded in respect and accountability while understanding the legal consequences of their actions.

- Incident Response and Reporting Mechanisms: Users learn how to respond to a cyber incident, including the available reporting channels and support resources.

- Inclusion-Focused Design: Content is tailored to the particular vulnerabilities of different demographic groups while remaining accessible and relevant.

Policy and Institutional Alignment

Integrating cyber safety into corporate social responsibility lets organisations contribute to national objectives, which include:

- Strengthening digital trust and resilience

- Supporting safe digital inclusion initiatives

- Complementing the efforts of institutions working on cybersecurity awareness and capacity building

A structured approach requires organisations to take three specific steps:

- Partnering with cybersecurity organisations and civil society

- Developing standardised cyber awareness modules

- Evaluating impact through behavioural change indicators rather than relying on access metrics

The Way Forward

Digital-era Corporate Social Responsibility needs to move from simply providing access to digital resources toward ensuring that users can go online securely. Digital literacy must be understood not just as a technical ability but as a social competency that encompasses safety, responsibility and resilience.

Companies need to recognise that their digital transformation efforts carry an obligation to manage the associated risks. Embedding cyber safety within corporate social responsibility frameworks will enable organisations to develop a secure and trustworthy digital environment that includes all users.

Conclusion

Corporate social responsibility must fulfil its core mission of creating inclusive societal benefit, addressing both the possibilities of the digital age and the security threats that accompany them. Digital literacy requires a new framework that integrates digital safety practices into its existing educational material.

Digital safety ensures that people gain the knowledge and skills they need to use digital resources securely. Achieving shared prosperity requires a framework that benefits every person through both social advancement and the protection of their rights.

References

- https://upload.indiacode.nic.in/schedulefile?aid=AC_CEN_22_29_00008_201318_1517807327856&rid=79

- https://www.allresearchjournal.com/archives/2025/vol11issue4/PartF/11-5-60-511.pdf

- https://www.unesco.org/en/dtc-finance-toolkit-factsheets/corporate-social-responsibility-csr

- https://www.investopedia.com/terms/c/corp-social-responsibility.asp

- https://digitalmarketinginstitute.com/blog/corporate-16-brands-doing-corporate-social-responsibility-successfully

- https://www.imd.org/blog/sustainability/csr-strategy/