Introduction

Embark on a groundbreaking exploration of the Darkweb Metaverse, a revolutionary fusion of the enigmatic dark web with the immersive realm of the metaverse. Unveiling a decentralised platform championing freedom of speech, the Darkverse promises unparalleled diversity of expression. However, as we delve into this digital frontier, we must tread cautiously, acknowledging the security risks and societal challenges that accompany the metaverse's emergence.

The Dark Metaverse is a unique combination of the mysterious dark web and the immersive digital world known as the metaverse. Imagine a place where users may participate in decentralised social networking, communicate anonymously, and freely express a range of viewpoints. It aims to provide an alternative to traditional online platforms, emphasizing privacy and freedom of speech. Nevertheless, it also brings new kinds of criminality and security issues, so it's important to approach this digital frontier cautiously.

In the vast expanse of the digital cosmos, there exists a realm that remains shrouded in mystery to the casual netizen—the dark web. It is a place where the surface web, the familiar territory of Google searches and social media feeds, constitutes a mere 5 per cent of the information iceberg floating in an ocean of data. Beneath this surface lies the deep web and the dark web, comprising the remaining 95 per cent, a staggering figure that beckons the brave and curious to explore its abysmal depths.

Imagine, a platform that not only ventures into these depths but intertwines them with the emerging concept of the metaverse—a digital realm that defeats the limitations of the physical world. This is the vision of the Darkweb Metaverse, the world’s premier endeavour to harness the enigmatic depths of the dark web and fuse it into the immersive experience of the metaverse.

As per Internet User Statistics 2024, There are over 5.3 billion Internet users in the world, meaning over 65% of the world’s population has access to the Internet. The Internet is used for various services. News, entertainment, and communication to name a few. The citizens of developed countries depend on the World Wide Web for a multitude of daily tasks such as academic research, online shopping, E-banking, accessing news and even ordering food online hence the Internet has become an integral part of our daily lives.

Surface Web

This layer of the internet is used by the general public on a daily basis. The contents of this layer are accessed by standard web browsers namely Google Chrome, and Mozilla Firefox to name a few. The contents of this layer of the internet are indexed by these search engines.

Deep Web

This is the second layer of the internet; its contents are not indexed by search engines. The content that is unavailable on the surface web is considered to be a part of the deep web. The deep web comprises a collection of various types of confidential information. Several Schools, Universities, Institutes, Government Offices and Departments, Multinational Companies (MNCs), and Private Companies store their database information and website-oriented server information such as online profile and accounts usernames or IDs and passwords or log in credentials and companies' premium subscription data and monetary transactional records in the Intra-net which is part of the deep web.

Dark Web

It is the least explored part of the internet which is considered to be a hub of various bizarre activities. The contents of the dark web are not indexed by search engines and specific software is required to access this layer of the internet namely TOR (The Onion Router) browser which cloaks to identify its users making them anonymous. The websites of the dark web are identified from .onion TLD (Top Level Domain). Due to anonymity provided in this layer, various criminal activities take place over there including Drugs trading, Arms trading, and Illegal PayPal account details to websites offering child pornography.

The Darkverse

The Darkweb Metaverse is not a mere novelty; it is a revolutionary step forward, a decentralised social networking platform that stands in stark contrast to centralised counterparts like YouTube or Twitter. Here, the spectre of censorship is banished, and the freedom of speech reigns supreme.

The architectonic prowess behind the Darkweb Metaverse is formidable. The development team is a coalition of former infrastructure maestros from Theta Network and virtuosos of metaverse design, bolstered by backend engineers from Gensokishi Metaverse. At the helm is a CEO whose tenure at the apex of large Japanese companies has endowed him with a profound understanding of the landscape, setting a solid foundation for the platform's future triumphs.

Financially, the dark web has been a flourishing underworld, with revenues ranging from $1.5 billion to $3.1 billion between 2020 and 2022. Darkverse, with its emphasis on user-friendliness and safety, is poised to capture a significant portion of this user base. The platform serves as a truly decentralised amalgamation of the Dark Web, Metaverse, and Social Networking Services (SNS), with a mission to provide an unassailable bastion for freedom of speech and expression.

The Darkweb Metaverse is not merely a sanctuary for anonymity and privacy; it is a crucible for the diversity of expression. In a world where centralised platforms can muzzle voices, Darkverse stands as a bulwark against such suppression, fostering a community where a kaleidoscope of opinions and information thrives. The ease of use is unparalleled—a one-time portal that obviates the need for third-party software to access the dark web, protecting users from the myriad risks that typically accompany such ventures.

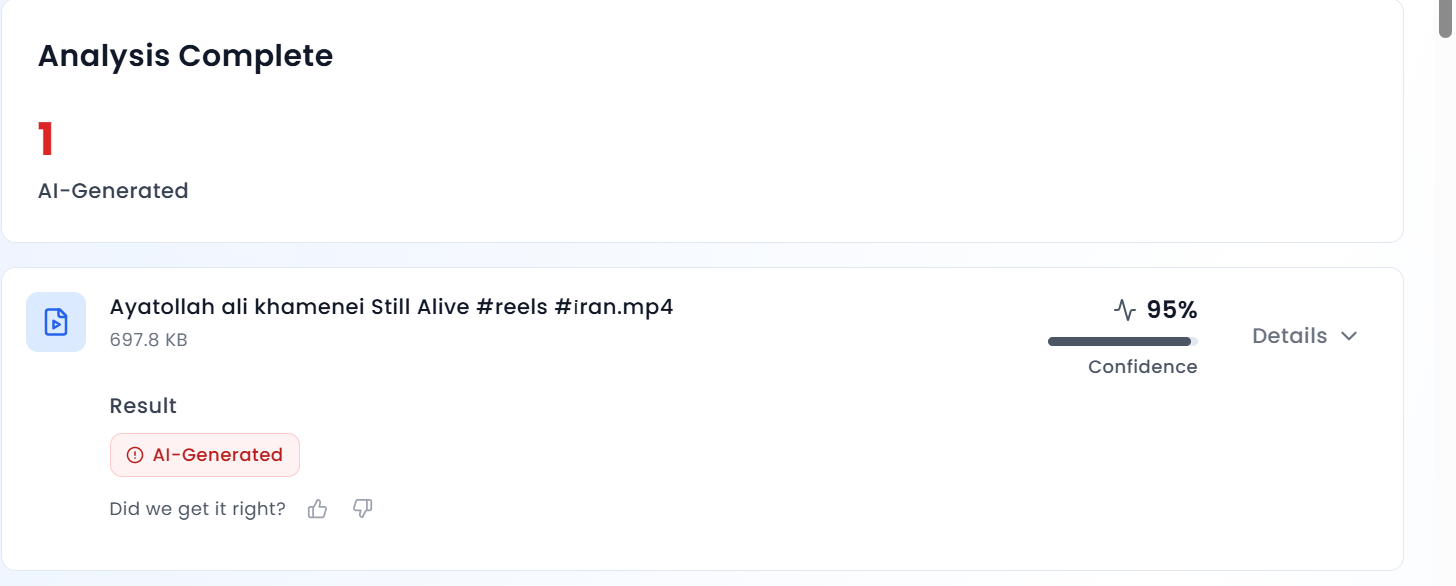

Moreover, the platform's ability to verify the authenticity of information is a game-changer. In an era laced with misinformation, especially surrounding contentious issues like war, Darkverse offers a sign of truth where the source of information can be scrutinised for its accuracy.

Integrating Technologies

The metaverse will be an immersive iteration of the internet, decked with interactive features of emerging technologies such as artificial intelligence, virtual and augmented reality, 3D graphics, 5G, holograms, NFTs, blockchain and haptic sensors. Each building block, while innovative, carries its own set of risks—vulnerabilities and design flaws that could pose a serious threat to the integrated meta world.

The dark web's very nature of interaction through avatars makes it a perfect candidate for a metaverse iteration. Here, in this anonymous world, commercial and personal engagements occur without the desire to unveil real identities. The metaverse's DNA is well-suited to the dark web, presenting a formidable security challenge as it is likely to evolve more rapidly than its real-world counterpart.

While Meta (formerly Facebook) is a prominent entity developing the metaverse, other key players include NVIDIA, Epic Games, Microsoft, Apple, Decentraland, Roblox Corporation, Unity Software, Snapchat, and Amazon. These companies are integral to constructing the vast network of real-time 3D virtual worlds where users maintain their identities and payment histories.

Yet, with innovation comes risk. The metaverse will necessitate police stations, not as a dystopian oversight but as a means to address the inherent challenges of a new digital society. In India, for instance, the integration of law enforcement within the metaverse could revolutionize the public's interaction with the police, potentially increasing the reporting of crimes.

The Perils within the Darkverse

The metaverse will also be a fertile ground for crimes of a new dimension—identity theft, digital asset hijacking, and the influence of metaverse interactions on real-world decisions. With a significant portion of social media profiles potentially being fraudulent, the metaverse amplifies these challenges, necessitating robust identity access management.

The integration of NFTs into the metaverse ecosystem is not without its security concerns, as token breaches and hacks remain a persistent threat. The metaverse's parallel economy will test the developers' ability to engender trust, a Herculean task that will challenge the boundaries of national economies.

Moreover, the metaverse will be a crucible for social engineering-based attacks, where the real-time and immersive nature of interactions could make individuals particularly vulnerable to deception and manipulation. The potential for early-stage fraud, such as the hyping and selling of virtual assets at unrealistic prices, is a stark reality.

The metaverse also presents numerous risks, particularly for children and adolescents who may struggle to distinguish between virtual and real worlds. The implications of such immersive experiences are intense, with the potential to influence behaviour in hazardous ways.

Security risks extend to the technologies supporting the metaverse, such as virtual and augmented reality. The exploitation of biometric data, the bridging of virtual and real worlds, and the tendency for polarisation and societal isolation are all issues requiring immediate attention.

A Way Forward

As we stand on the cusp of this new digital frontier, it is evident that the metaverse, despite its reliance on blockchain, is not immune to the privacy and security breaches that have plagued conventional IT infrastructure. Data security, Identity theft, network security, and ransomware attacks are just a few of the challenges on the way.

In this quest into the unknown, the Darkweb Metaverse radiates with the promise of freedom and the thrill of discovery. Yet, as we navigate these shadowy depths, we must remain vigilant, for the very technologies that empower us also rear the seeds of our grim vulnerabilities. The metaverse is not just a new chapter in the story of the internet—it is a whole narrative, one that we must write with caution and care.

References