#FactCheck! Viral Image Claiming Virat Kohli and Rohit Sharma Visited Kedarnath Is AI-Generated

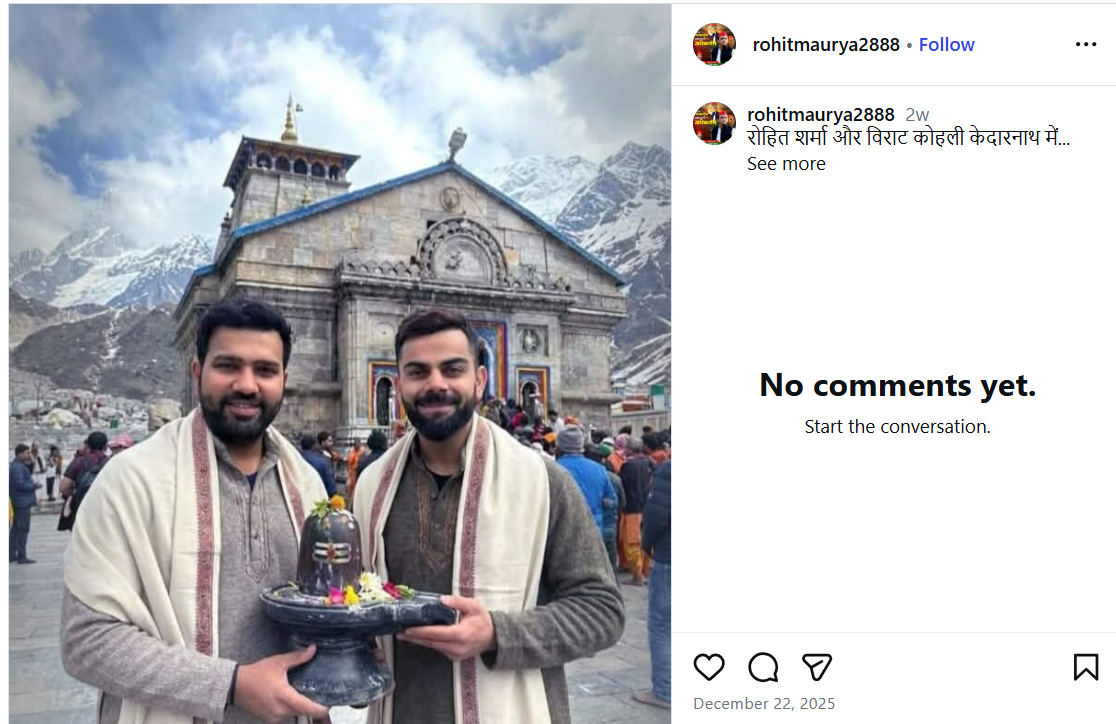

A photo featuring Indian cricketers Virat Kohli and Rohit Sharma is being widely shared on social media. In the image, both players are seen holding a Shivling, with the Kedarnath temple visible in the background. Users sharing the image claim that Virat Kohli and Rohit Sharma recently visited Kedarnath.

However, CyberPeace Foundation’s investigation found the claim to be false. Our verification established that the viral image is not real but has been created using Artificial Intelligence (AI) and is being circulated with a misleading narrative.

The Claim

An Instagram user shared the viral image on December 22, 2025, with the caption stating that Rohit Sharma and Virat Kohli are in Kedarnath. The post has since been widely reshared by other users, who assumed the image to be authentic.

Fact Check

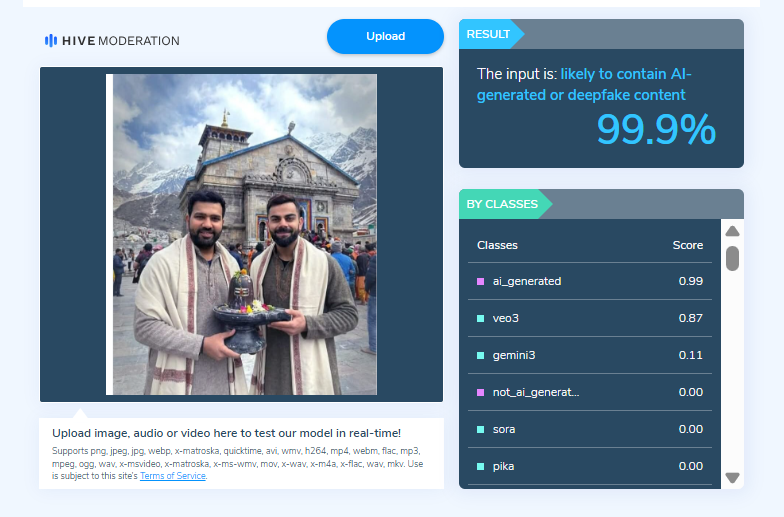

On closely examining the viral image, the Desk noticed visual inconsistencies suggesting that it may be AI-generated. To verify this, the image was scanned using the AI detection tool HIVE Moderation. According to the results, the image was 99 per cent likely to be AI-generated.

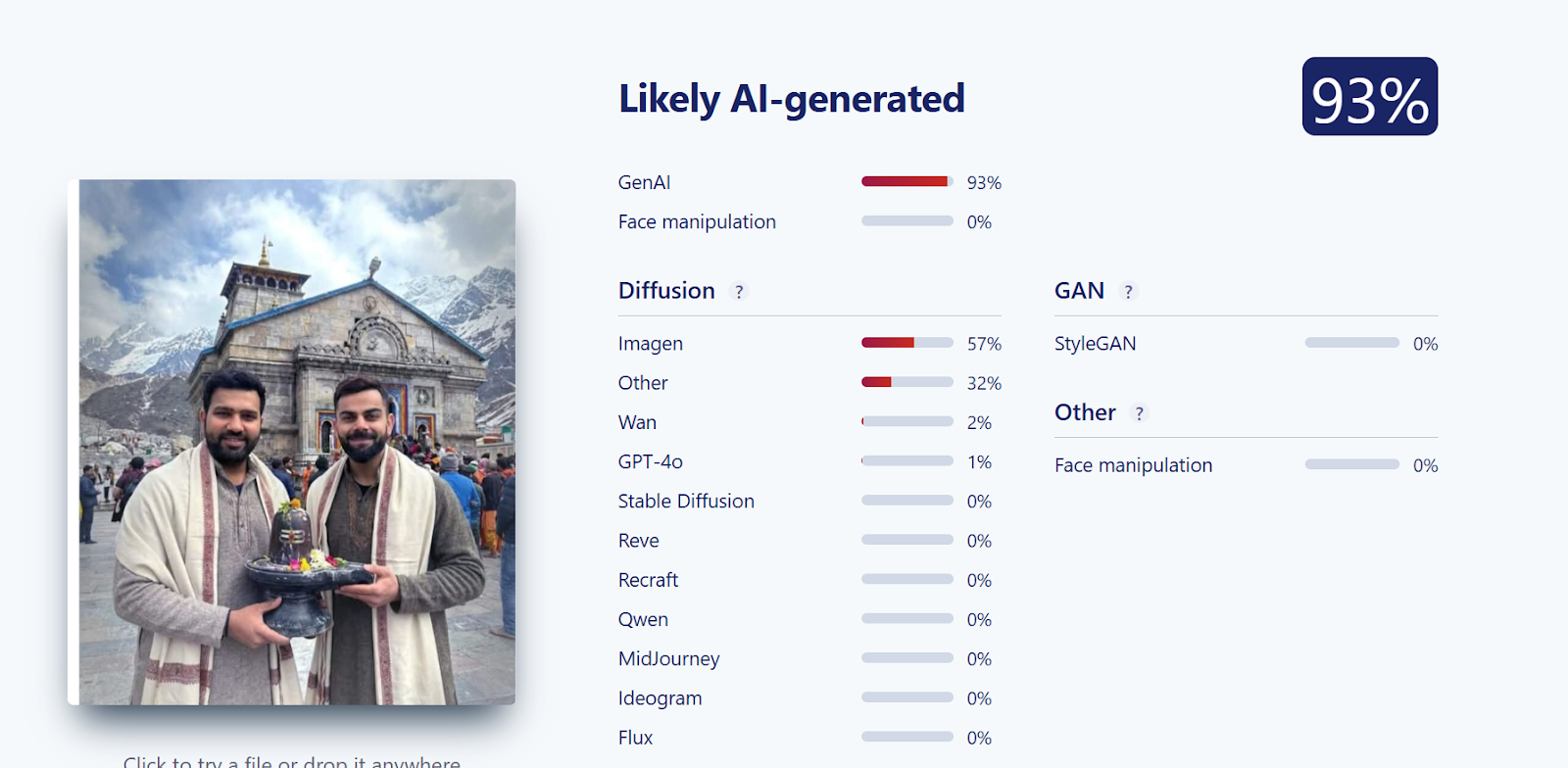

Further verification was conducted using another AI detection tool, Sightengine. The analysis revealed that the image was 93 per cent likely to be AI-generated, reinforcing the findings from the previous tool.
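As a rough illustration of how the two detector results above can be read together, the sketch below averages the reported scores and checks whether both clear a cut-off. The 0.90 threshold and the `summarize` helper are assumptions made for this example; neither tool publishes such a threshold, and this is not the Desk's actual workflow.

```python
from statistics import mean

# Scores reported in the article, read as the likelihood that the image is AI-generated.
detector_scores = {"HIVE Moderation": 0.99, "Sightengine": 0.93}

# Hypothetical cut-off chosen for illustration; not a value published by either tool.
AI_THRESHOLD = 0.90


def summarize(scores, threshold=AI_THRESHOLD):
    """Return the mean score and whether every detector meets the threshold."""
    average = mean(scores.values())
    unanimous = all(score >= threshold for score in scores.values())
    return average, unanimous


average, unanimous = summarize(detector_scores)
print(f"mean AI likelihood: {average:.2f}, all detectors agree: {unanimous}")
```

Requiring agreement between independent tools, rather than trusting a single score, is the same cross-checking idea the verification above relies on.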

Conclusion

CyberPeace Foundation’s research confirms that the viral image claiming Virat Kohli and Rohit Sharma visited Kedarnath is fabricated. The image has been generated using AI technology and is being falsely shared on social media as a real photograph.

Related Blogs

Introduction

The Department of Telecommunications (DoT) has launched the 'Digital Intelligence Platform (DIP)' and the 'Chakshu' facility on the Sanchar Saathi portal to combat cybercrimes and financial frauds. Union telecom, IT and railways minister Ashwini Vaishnaw announced the initiatives, stating that the government has been working to counter cyber frauds at national, organizational, and individual levels. The Sanchar Saathi portal has successfully tackled such attacks, and the two new facilities will further enhance the capacity to check any kind of cyber security threat.

The Digital Intelligence Platform is a secure and integrated platform for real-time intelligence sharing, information exchange, and coordination among stakeholders, including telecom operators, law enforcement agencies, banks, financial institutions, social media platforms, and identity document issuing authorities. It also contains information regarding cases detected as misuse of telecom resources.

The 'Chakshu' facility allows citizens to report suspected fraudulent communication received over call, SMS, or WhatsApp, such as messages about KYC expiry or updates to a bank account, payment wallet, SIM, gas connection, or electricity connection; sextortion; impersonation of a government official or relative to solicit money; and threats of disconnection of all mobile numbers by the Department of Telecommunications.

The launch of these proactive initiatives represents another significant stride by the Ministry of Communications and the Department of Telecommunications in combating cybersecurity threats to citizens' digital assets.

In this age of technology, there is reason to be concerned about the threats that cybercriminals pose to individuals and organizations. The risks of relying on digital means for communication, e-commerce, and critical infrastructure have increased significantly, so it is important to have proper measures in place to prevent cybercrime and destructive behavior. The Department of Telecommunications has unveiled "Chakshu," a digital intelligence portal aimed at combating cybercrimes. This platform seeks to enhance the country's cyber defense capabilities by providing enforcement agencies with effective tools and actionable intelligence for countering cybercrimes, including financial frauds.

Digital Intelligence Platform (DIP)

The Digital Intelligence Platform (DIP), developed by the Department of Telecommunications, is a secure and integrated platform for real-time intelligence sharing, information exchange, and coordination among stakeholders, i.e. Telecom Service Providers (TSPs), law enforcement agencies (LEAs), banks and financial institutions (FIs), social media platforms, identity document issuing authorities, etc. The portal also contains information regarding cases detected as misuse of telecom resources. The shared information could be useful to the stakeholders in their respective domains. It also works as a backend repository for citizen-initiated requests on the Sanchar Saathi portal for action by the stakeholders. The DIP is accessible to the stakeholders through secure connectivity, and the relevant information is shared based on their respective roles. However, the platform is not accessible to citizens.

What is Chakshu?

Chakshu, which means “eye” in Hindi, is a new feature on the Sanchar Saathi portal. This citizen-friendly platform allows you to report suspicious communication you receive via calls, SMS, or WhatsApp. “Chakshu” is an advanced tool to safeguard against modern-day cybercriminal activities. It uses the latest technologies to assemble and analyze digital information and provides law enforcement agencies with useful data on what should be done next.

Here are some examples of what you can report:

- Fraudulent messages claiming your KYC (Know Your Customer) details need to be updated.

- Fraudulent requests to update your bank account, payment wallet, or SIM card details.

- Phishing attempts impersonating government officials or relatives asking for money.

- Fraudulent threats of disconnection of your SIM connections.

How Chakshu Aims to Crack Down on Cybercrime and Financial Frauds

Chakshu is a new tool on the Sanchar Saathi platform that invites individuals to report suspected fraudulent communications received by phone, SMS, or WhatsApp. These fraudulent activities may include attempts to deceive individuals through schemes such as KYC expiry or update requests for bank accounts, payment wallets, SIM cards, gas connections, and electricity connections, sextortion, impersonation of government officials or relatives for financial gain, or false claims of mobile number disconnection by the Department of Telecommunications.

The tool is designed to give investigators actionable intelligence and insights, enabling LEAs to conduct targeted investigations into financial frauds and cybercrimes. It supports comprehensive data analysis and evidence collection by mapping the connections between individuals, organizations, and illicit activities, which helps law enforcement agencies dismantle criminal operations.

Chakshu’s Impact

India has launched Chakshu, a digital intelligence tool that strengthens the country's cybersecurity policy. Chakshu employs modern technology and real-time data analysis to enhance India's cyber defenses. By taking a proactive approach to threat analysis and prevention, law enforcement can detect and neutralize possible threats before they become significant crises. Chakshu also improves the resilience of critical infrastructure and digital ecosystems, safeguarding them against cyber-attacks. Overall, Chakshu plays an important role in India's cybersecurity posture and the protection of national interests in the digital era.

Where can Chakshu be accessed?

Chakshu can be accessed through the government's Sanchar Saathi web portal: https://sancharsaathi.gov.in

Conclusion

The launch of the Digital Intelligence Platform and Chakshu facility is a step forward in safeguarding citizens from cybercrimes and financial fraud. These initiatives use advanced technology and stakeholder collaboration to empower law enforcement agencies. The Department of Telecommunications' proactive approach demonstrates the government's commitment to cybersecurity defenses and protecting digital assets, ensuring a safer digital environment for citizens and critical infrastructure.

References

- https://telecom.economictimes.indiatimes.com/news/policy/dot-launches-digital-intelligence-portal-chakshu-facility-to-curb-cybercrimes-financial-frauds/108220814

- https://bankingfrontiers.com/digital-intelligence-platform-launched-to-curb-cybercrime-financial-fraud/

- https://www.business-standard.com/india-news/calcutta-hc-justice-abhijit-gangopadhyay-sends-his-resignation-to-prez-cji-124030500367_1.html

- https://www.the420.in/dip-chakshu-government-launches-powerful-weapons-against-cybercrime/

- https://pib.gov.in/PressReleaseIframePage.aspx?PRID=2011383

Introduction

The Ministry of Communications, Department of Telecommunications notified the Telecommunications (Telecom Cyber Security) Rules, 2024 on 22nd November 2024. These rules were notified to address the vulnerabilities that rapid technological advancements create. The evolving nature of cyber threats has made it necessary to strengthen and enhance telecom cyber security. These rules empower the central government to seek traffic data and any other data (other than the content of messages) from service providers.

Background Context

The Telecommunications Act of 2023 was passed by Parliament in December 2023; it received the President's assent and was published in the official Gazette on December 24, 2023. The act is divided into 11 chapters, 62 sections, and 3 schedules. It repealed the old legislation, viz. the Indian Telegraph Act of 1885 and the Indian Wireless Telegraphy Act of 1933. The government has enforced the act in phases. Sections 1, 2, 10-30, 42-44, 46, 47, 50-58, 61, and 62 came into force on June 26, 2024, while sections 6-8, 48, and 59(b) were notified to be effective from July 5, 2024.

These rules have been notified under the powers granted by Section 22(1) and Section 56(2)(v) of the Telecommunications Act, 2023.

Key Provisions of the Rules

These rules collectively aim to reinforce telecom cyber security and ensure the reliability of telecommunication networks and services. They are as follows:

The Central Government, or an agency authorized by it, may request traffic or other data from a telecommunication entity through the Central Government portal to safeguard and ensure telecom cyber security. In addition, the Central Govt. can instruct telecommunication entities to establish the necessary infrastructure and equipment for data collection, processing, and storage from designated points.

● Obligations Relating To Telecom Cybersecurity:

Telecom entities must adhere to various obligations to prevent cyber security risks. Telecommunication cyber security must not be endangered, and no one is allowed to send messages that could harm it. Misuse of telecommunication equipment such as identifiers, networks, or services is prohibited. Telecommunication entities are also required to comply with directions and standards issued by the Central Govt. and furnish detailed reports of actions taken on the government portal.

● Compulsory Measures To Be Taken By Every Telecommunication Entity:

Telecom entities must adopt a telecom cyber security policy and notify the Central Govt. of it to enhance cybersecurity. They have to identify and mitigate risks of security incidents, ensure timely responses, and take appropriate measures to address such incidents and minimize their impact. Periodic telecom cyber security audits must be conducted to assess the resilience of telecom entities' networks against potential threats. They must report security incidents promptly to the Central Govt. and establish facilities like a Security Operations Centre.

● Reporting of Security Incidents:

- Telecommunication entities must report the detection of security incidents affecting their network or services within six hours.

- 24 hours are provided for submitting detailed information about the incident, including the number of affected users, the duration, geographical scope, the impact on services, and the remedial measures implemented.

The Central Govt. may require the affected entity to provide further information, such as its cyber security policy, or conduct a security audit.
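The reporting timeline above can be sketched as a small deadline calculation. This is only an illustration: measuring both windows from the moment of detection is an assumption made here, and the function names and the sample timestamp are hypothetical rather than taken from the rules or any government portal.

```python
from datetime import datetime, timedelta

# Time limits as described above. Measuring both from the moment of
# detection is an assumption made for this sketch.
INITIAL_REPORT_WINDOW = timedelta(hours=6)
DETAILED_REPORT_WINDOW = timedelta(hours=24)


def reporting_deadlines(detected_at):
    """Return (initial_report_due, detailed_report_due) for an incident."""
    return (
        detected_at + INITIAL_REPORT_WINDOW,
        detected_at + DETAILED_REPORT_WINDOW,
    )


def is_overdue(deadline, now):
    """Return True if the deadline has already passed at time `now`."""
    return now > deadline


detected = datetime(2024, 12, 1, 22, 30)  # hypothetical detection time
initial_due, detailed_due = reporting_deadlines(detected)
print("initial report due: ", initial_due.isoformat())
print("detailed report due:", detailed_due.isoformat())
```

A compliance team could run a check like `is_overdue(initial_due, datetime.now())` inside its incident tracker to flag reports that are about to miss the six-hour window.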

CyberPeace Policy Analysis

The notified rules reflect critical updates from their draft version, including the obligation to report incidents immediately upon awareness. This ensures greater privacy for consumers while still enabling robust cybersecurity oversight. Importantly, individuals whose telecom identifiers are suspended or disconnected due to security concerns must be given a copy of the order and a chance to appeal, ensuring procedural fairness. The notified rules have removed the definitions of "traffic data" and "message content", an omission that may lead to operational ambiguities. While the rules establish a solid foundation for protecting telecom networks, they pose significant compliance challenges, particularly for smaller operators who may struggle with the costs associated with audits, infrastructure, and reporting requirements.

Conclusion

The Telecom Cyber Security Rules, 2024 represent a comprehensive approach to securing India’s communication networks against cyber threats. Mandating robust cybersecurity policies, rapid incident reporting, and procedural safeguards allows the rules to balance national security with privacy and fairness. However, addressing implementation challenges through stakeholder collaboration and detailed guidelines will be key to ensuring compliance without overburdening telecom operators. With adaptive execution, these rules have the potential to enhance the resilience of India’s telecom sector and also position the country as a global leader in digital security standards.

References

● Telecommunications Act, 2023 https://acrobat.adobe.com/id/urn:aaid:sc:AP:767484b8-4d05-40b3-9c3d-30c5642c3bac

● CyberPeace First Read of the Telecommunications Act, 2023 https://www.cyberpeace.org/resources/blogs/the-government-enforces-key-sections-of-the-telecommunication-act-2023

● Telecommunications (Telecom Cyber Security) Rules, 2024

Introduction

MGM Resorts International has suffered a cyberattack that led it to shut down a number of its computer systems, including its website. The company is working with external cybersecurity experts to resolve the issue, which has affected its computer systems. MGM is a large entity that operates thousands of hotel rooms in Las Vegas and across the United States. In a statement about the incident, MGM Resorts said it had recently identified a cybersecurity issue affecting some of the Company's systems. Promptly after detecting the issue, it began an investigation with assistance from leading external cybersecurity experts. MGM has notified law enforcement and taken prompt action to protect its systems and data, including shutting down certain systems. MGM further stated that the investigation is ongoing.

The issue

Basic operations such as MGM's online reservation and booking system have been affected and shut down due to the cybersecurity issue, disrupting the plans of many visitors. Casino security has long been state of the art, because casinos have always been attractive targets for robbers and con artists, as many movies have depicted. Today, con artists and robbers are strengthened by cyber tactics, and that is exactly what was seen in the MGM attack.

MGM Resorts is home to best-in-class amenities and facilities for guests, but as tourist traffic has increased, so have the vulnerabilities and the scope for cyber attacks. Open Wi-Fi networks in the establishments and the transition of casinos to e-casinos have contributed to this, as both reflect a major shift towards digital, technology-based intervention to improve customer experience and streamline operations.

How real is the threat?

As reported by MGM Resorts, the following systems were impacted in the cyber security attack:

- Slot Machines: The slot machines in the casino suddenly went offline and displayed an error message to players. Some players who were already using the slot machines lost their bets and were unable to withdraw their winnings.

- Room Keys: Some of the guests reported that the room keys became unresponsive, and in some cases, the replacement keys were also inactive for some time, causing massive chaos at the reception.

- Booking Status: Most bookings today are made online, and this was one of the worst-hit segments of the cyber attack. Most automated bookings were put on hold, and confirmations could be made only at the hotel reception, leading to large-scale cancellations and financial losses for both the hotel and its customers.

- MGM App: The official MGM Resorts app was completely down, causing confusion and panic among guests. Users also received notifications asking them to speak to different customer care executives, but some of the numbers went unanswered and seemed to be operated by bad actors.

- Data breach: The main aim of the cyber attack was a data breach. The attack led to the breach of personal data of most of the users registered on the app or in MGM Resorts' systems.

Conclusion

Cyber attacks on the tourism industry are a major and growing concern for the industry and its customers. Given the sensitivity of the data and the regular inflow of personal information, hotels' systems are an attractive target for bad actors. The cyber attack was no less than a fire sale, wherein all segments of the services offered were impacted. Similar attacks were reported by MGM in 2019 and 2020, and safety measures were subsequently deployed, but bad actors have hit the resort chain again. In such cases, the most important defences are a secure and regularly updated firewall, upskilling of staff on IT issues and attacks, and active reporting and investigation mechanisms to assist the LEAs. In times of rising cyberattacks, one needs to be critical of one's data management and digital footprint. The sooner we adopt safe, secure and resilient cyber hygiene practices, the safer our future will be.

References:

https://www.bleepingcomputer.com/news/security/mgm-resorts-shuts-down-it-systems-after-cyberattack/

https://www.cnbc.com/2023/09/12/mgm-resorts-cybersecurity-incident-forces-system-outage.html