Using incognito mode and VPN may still not ensure total privacy, according to expert

SVIMS Director and Vice-Chancellor B. Vengamma lighting a lamp to formally launch the cybercrime awareness programme conducted by the police department for the medical students in Tirupati on Wednesday.

An awareness meet on safe Internet practices was held for the students of Sri Venkateswara University (SVU) and Sri Venkateswara Institute of Medical Sciences (SVIMS) here on Wednesday.

“Cyber criminals on the prowl can easily track our digital footprint, steal our identity and resort to impersonation,” cyber expert I.L. Narasimha Rao cautioned the college students.

Addressing the students in two sessions, Mr. Narasimha Rao, who is a Senior Manager with CyberPeace Foundation, said seemingly common acts like browsing a website, and liking and commenting on posts on social media platforms could be used by impersonators to recreate an account in our name.

Turning to the youth, Mr. Narasimha Rao said that incognito mode and a Virtual Private Network (VPN), used as a protected network connection, do not ensure total privacy, as third parties could still snoop on the websites being visited by users. He also cautioned them about tactics like ‘phishing’, ‘vishing’ and ‘smishing’ being used by cybercriminals to steal our passwords and gain access to our accounts.

“After cracking the whip on websites and apps that could potentially compromise our security, the Government of India has recently banned 232 more apps,” he noted.

Additional Superintendent of Police (Crime) B.H. Vimala Kumari appealed to cyber victims to call 1930 or the Cyber Mitra’s helpline 9121211100. SVIMS Director B. Vengamma stressed the need for caution with smartphones becoming an indispensable tool for students, be it for online education, seeking information, entertainment or for conducting digital transactions.

Related Blogs

Introduction

As automobiles have modernized, so have the methods employed by criminals who seek to steal them. The old methods of smashing a car window or bypassing an engine lock are no longer prevalent. Modern car thieves clone keys and digital signals, and use sophisticated methods that leave no trace behind. In an era where intelligence is crucial, the forensic examination of car keys has become an indispensable tool for investigations, providing clues buried within ordinary car keys.

The Need for Car Key Forensics Today

Every day, thousands of cars worth millions are hacked or stolen around the globe. Strikingly, most of these thefts show no sign of breakage or forced entry. This is because thieves exploit vulnerabilities in wireless key systems to unlock vehicles without leaving any trace behind. Car key forensics has therefore become more important than ever before.

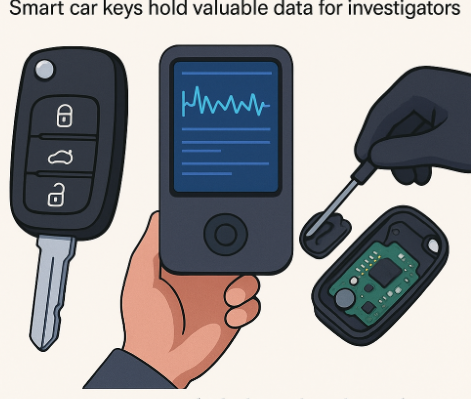

Forensic Value of Smart Key

A smart key does more than lock and unlock a vehicle. It operates as a miniature computer in its own right. Smart keys can contain information such as pairing records, frequency of use, or the last time the key was used to unlock the vehicle, and so can offer evidence of criminal activity carried out with them.

Patterns of Vehicle Theft

By examining the chip in a key, an investigator can establish whether the key was legitimately programmed or tampered with. Such forensics becomes especially important when trying to detect and monitor car theft rings that employ cloning machines or software.

Confirming Ownership and Authenticity

In cases involving insurance claims or fraud, smart key data can help confirm whether the person making a claim actually owned or used the car during the incident. It’s digital proof that goes beyond what paperwork or statements can show.

Strengthening Legal Cases

When brought to court, data doesn’t lie. A properly handled forensic examination of a car key can provide hard evidence, the kind that holds up under questioning and supports or disproves claims with a high degree of accuracy. In many cases, this small device becomes the most reliable witness in the investigation.

A Real-Life Example

Consider the following scenario: An expensive SUV is stolen from a parking lot monitored by security cameras. No one is captured on camera and there is no sign of forced entry. After days of investigation, the police arrest a suspect carrying a smart key.

During forensic analysis of the smart key, it is revealed that:

- The transponder has an ID number which can be matched against the immobilizer installed in the vehicle.

- The rolling code counter has been incremented in a way that corresponds with the date of the reported theft.

- The extracted information helps match the key's pairing timestamp with the particular make and model of the vehicle.

All this from a single piece of evidence – the smart key.

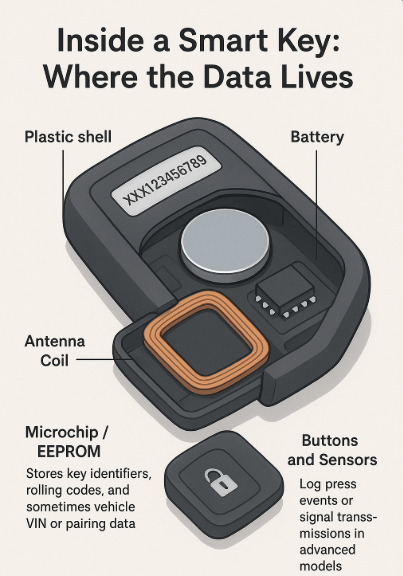

Inside a Smart Key: Where the Data Lives

An average smart key is not only a remote but also a multi-level set of data carriers:

- Plastic Shell – could include serial numbers and manufacturing information.

- Battery – helps estimate how long the key has been in use or detect any modifications.

- Antenna Coil – sends encrypted information to the immobilizer of a car.

- Microchip / EEPROM – holds the key identification code, rolling code, VIN, and/or other information about the vehicle.

- Buttons / sensors – could record any pressing or transmission actions in some cases.

All the components above, once properly studied with forensic software, provide valuable information.

How the Investigator Uncovers the Truth

Investigating the true story of a vehicle is no different from handling other forms of digital evidence. Forensic analysts treat the car key as what it is, an encrypted device, and use specialized techniques to reveal the information it contains.

Some of the methods used by modern forensic laboratories include:

1. Intercepting Radio Signals

Every smart key transmits radio signals to communicate with its car. Specialists use advanced antennas and radio frequency (RF) analysers to capture and analyse them. This makes it possible to understand the interaction between the key and the car: how often the key was used, what kind of authentication procedure takes place, and whether the signal matches the car’s or has been forged.

2. Checking Out the Key’s Brain (Analysis of EEPROM)

Every key carries a chip that governs its activity. The chip contains a memory module (EEPROM – Electrically Erasable Programmable Read-Only Memory), which holds various data, including key IDs and rolling codes. This data can be carefully retrieved with specialized tools, making it possible to determine whether somebody tried to tamper with the key.
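To make the idea of reading fields out of an EEPROM dump concrete, here is a minimal Python sketch. The 16-byte record layout, the field names, and the sample bytes are all invented for illustration; real keys use manufacturer-specific and often encrypted layouts, so this only shows the general shape of the step, not any actual tool or format.

```python
import struct
from dataclasses import dataclass

# HYPOTHETICAL 16-byte record layout, invented for illustration only:
#   bytes 0-3   key ID (big-endian unsigned int)
#   bytes 4-7   rolling-code counter (big-endian unsigned int)
#   bytes 8-15  8 ASCII characters identifying the paired vehicle
RECORD = struct.Struct(">II8s")


@dataclass
class KeyRecord:
    key_id: int
    rolling_counter: int
    vehicle_tag: str


def parse_dump(dump: bytes) -> KeyRecord:
    """Decode the assumed record from the start of a raw memory dump."""
    if len(dump) < RECORD.size:
        raise ValueError("dump is shorter than the assumed record layout")
    key_id, counter, tag = RECORD.unpack_from(dump, 0)
    return KeyRecord(key_id, counter, tag.decode("ascii", errors="replace"))


# Made-up sample bytes standing in for a dump read from a key
sample = bytes.fromhex("0001e240" "0000002a") + b"ABC12345"
record = parse_dump(sample)
print(record)
```

In practice the analyst would work on a verified copy of the dump rather than the original chip, so that the evidence itself is never modified.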

3. The Correlation between the Key and Car’s Information

The information stored inside the key is not examined in isolation; investigators correlate the key's data with that of the vehicle itself (ECU and immobilizer). If the two sets of information coincide, the investigation may conclude that the key belongs to the vehicle. If they do not, this may indicate cloning or tampering.
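The correlation step described above can be sketched roughly as follows. The record types, field names, and the counter window of 256 are assumptions made for this example; actual immobilizer data and acceptance windows vary by manufacturer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyData:
    transponder_id: str
    rolling_counter: int


@dataclass(frozen=True)
class VehicleData:
    paired_transponder_ids: frozenset
    last_seen_counter: int


def correlate(key: KeyData, vehicle: VehicleData, window: int = 256) -> list:
    """Return a list of mismatches; an empty list means the records agree.

    `window` is an ASSUMED tolerance: a genuine key's counter is expected
    to be at or slightly ahead of the value the vehicle last saw.
    """
    findings = []
    if key.transponder_id not in vehicle.paired_transponder_ids:
        findings.append("transponder ID not paired with this vehicle")
    drift = key.rolling_counter - vehicle.last_seen_counter
    if not 0 <= drift <= window:
        findings.append(f"rolling counter outside expected window (drift={drift})")
    return findings


# Illustrative values only
vehicle = VehicleData(frozenset({"A1B2C3"}), last_seen_counter=1000)
genuine = correlate(KeyData("A1B2C3", 1003), vehicle)
suspect = correlate(KeyData("FFFFFF", 20), vehicle)
print(genuine)
print(suspect)
```

An empty result supports the conclusion that the key belongs to the vehicle, while any finding is a lead to investigate further rather than proof of cloning on its own.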

4. Identifying the Tampering and Cloning Evidence

As mentioned above, thieves sometimes use unlawful programming devices to duplicate smart car keys. To detect possible cloning, experts examine the key with various diagnostic devices to find out whether it was modified by changing the encryption code, the frequencies, or the hardware itself.

The end result of this process is striking: actions carried out with a particular key are documented and recorded inside the device itself. Even if someone tries to hide or remove information about an incident, some data will usually remain.

Car Key Forensics in the Future

The evolution of cars to connect with other devices and adopt self-driving technologies requires new investigative methods to be used for vehicle-related crimes. Advanced car keys or smartphone apps that replace physical keys will likely incorporate biometric authentication, cloud integration, or blockchain records of key activity in the near future.

Such improvements will pose several threats and offer many benefits:

- Artificial intelligence tools can determine if the car key is cloned based on its behaviour pattern.

- Blockchain validation ensures all key-related activities are recorded and cannot be altered.

- Cyber-forensic protocols will become increasingly necessary for investigating criminal activity related to vehicles.

Car key forensics technology will not only allow solving crimes but may become instrumental in crime prevention.
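The tamper-evidence idea behind blockchain records of key activity can be illustrated with a simple hash chain. This is a minimal sketch, not a real blockchain: there is no distributed ledger or consensus here, and the event fields are hypothetical. It only shows why altering a past entry becomes detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def entry_hash(prev_hash: str, event: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> None:
    """Add an event, linking it to the previous entry's hash."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev, "hash": entry_hash(prev, event)})


def verify(log: list) -> bool:
    """Recompute every link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["hash"] != entry_hash(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True


# Hypothetical key events
log = []
append(log, {"action": "unlock", "counter": 41})
append(log, {"action": "start", "counter": 42})
intact = verify(log)

log[0]["event"]["counter"] = 7  # simulate someone rewriting history
tampered_ok = verify(log)
print(intact, tampered_ok)
```

Because each entry's hash depends on everything before it, an investigator who holds a trusted copy of the latest hash can tell whether earlier records were changed.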

Conclusion

A car key in this era is more than just an unlocking mechanism; it is a miniature data storage facility, which can yield information about the user, intentions, and access rights. The more cars become technologically advanced, the more the examination of smart keys becomes necessary as part of correlating physical evidence with digital investigation. It clearly indicates how small objects such as keys can play pivotal roles in cracking cases.

Executive Summary

Amid the ongoing tensions involving the United States, Israel, and Iran, a video of a cargo ship engulfed in flames is being widely shared across social media platforms. The clip shows a vessel burning intensely at sea, with users claiming that Iran targeted the ship with a drone for attempting to cross the Strait of Hormuz without permission. Some users have also claimed that the destroyed vessel was a Pakistani-flagged oil tanker hit by Iranian missiles. However, research by CyberPeace found the claim to be false. Our verification also reveals that the viral video is being misrepresented.

Claim

Social media users, including an X (formerly Twitter) account named “IranDefenceForce,” shared the video claiming that Iran targeted an oil tanker in the Strait of Hormuz for allegedly violating restrictions.

Fact Check

A keyword-based news search led us to multiple credible reports mentioning a statement by Iran’s Foreign Minister Abbas Araghchi. According to reports, Iran had allowed ships from “friendly countries” including India, China, Russia, Iraq, and Pakistan to pass through the Strait of Hormuz.

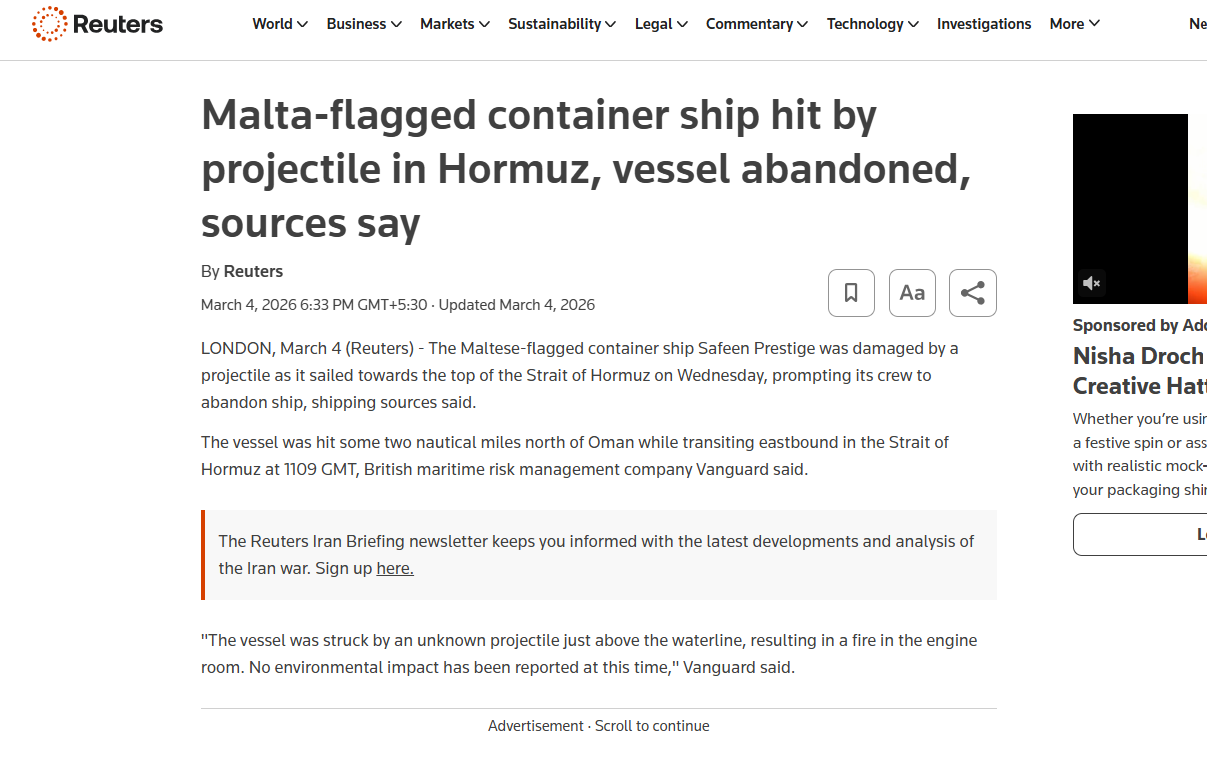

A March 26, 2026 report by The Hindu stated that Araghchi also emphasized Iran’s assertion of sovereignty over the strategic waterway connecting the Persian Gulf and the Gulf of Oman. The same statement was also shared via the official X handle of the Iranian Consulate in Mumbai. During a frame-by-frame analysis of the viral video, we noticed the word “SAFEEN” written on a part of the ship. Using this clue, we conducted a targeted news search and found a report by Reuters dated March 4, 2026.

According to the report, a Malta-flagged container ship named Safeen Prestige was damaged in an attack while heading toward the Strait of Hormuz. Shipping sources cited in the report stated that the vessel was struck around 1109 GMT while sailing eastward, approximately two nautical miles north of Oman. The ship had reportedly departed from Sharjah Port in the United Arab Emirates but was damaged before reaching its destination. Its last known location was in the Persian Gulf. Additionally, earlier this month, another cargo vessel named Mayuri Naree was also attacked near Iran’s Qeshm Island. As per Reuters, an explosion caused a fire in the engine room, after which 20 crew members were rescued by the Omani navy, while three remained missing.

Conclusion

The viral video does not show Iran targeting a Pakistani oil tanker for violating restrictions in the Strait of Hormuz. In reality, the clip features the Malta-flagged container ship Safeen Prestige, which was damaged in an unidentified attack in the Persian Gulf. The claim being circulated on social media is misleading.

Introduction

Human trafficking has been a significant concern and threat to society for a very long time. Human traffickers, and the modus operandi they have adopted and deployed over the years, have also affected our sense of physical safety. We are always cautious about younger children whenever we go out to crowded or unfamiliar places. This threat has also migrated to cyberspace, where it takes new and different forms. These crimes are committed using technology and are further supported by different cybercrimes.

What is Cyber-Enabled Human Trafficking?

Cyber-enabled human trafficking is the evolution of human trafficking in the digital age. Bad actors lure victims via the internet and use social engineering to exploit their vulnerabilities and trap them. Today, the crime is often carried out under the guise of fake job offers and the promise of a better lifestyle in major metropolitan cities. This crime has now gone beyond the geographical boundaries of our nation, and victims often end up in remote locations in the Middle East or South East Asia.

Cybercrime Hubs in Myanmar

Reports indicate that many trafficked victims are taken to various cybercrime hubs in Myanmar. The victims are often lured on the pretext of overseas job offers that pay handsomely. They make their way into the foreign nation but are then cornered by the bad actors, segregated, and taken to different hubs. The victims are often school graduates seeking basic jobs to earn a living. They are taken into cybercrime hubs allegedly run by Chinese criminal syndicates.

The victims are kept in tough conditions, beaten up, and held captive in remote jungles. Once a victim has lost hope, the criminals train them to commit cyber frauds like phishing. The victims are given scripts and mobile numbers to commit cybercrimes, along with targets they must meet to ensure their survival; under such dark and threatening conditions, they give in to the demands just to remain alive. Some victims do make their way back home, but only after 6-7 years of constant torture and abuse aimed at forcing them to commit cybercrimes. The majority of such survivors face trouble seeking legal assistance, as the criminals are almost impossible to track, which makes redressal for the crimes and rehabilitation for survivors difficult.

How to stay safe?

The criminals in such acts often target the vulnerable sections of the population; these people generally hail from tier 3 towns and rural areas. They aspire to a better life and better earning opportunities, and due to limited education and minimal awareness, they fail to see the traps set by the criminals. The population at large can adopt the following measures and safe practices to avoid such horrific threats:

- Avoid Stranger interaction: Avoid interacting with strangers on any online platform or portal. Social media sites are the most used platforms by bad actors to make contact with potential victims.

- Do not Share: Avoid sharing any personal information with anyone online, and avoid filling out third-party surveys/forms seeking personal information.

- Check, Check and Recheck: Always be on alert for threats and always check and cross-check any link or platform you use or access.

- Too good to be true: If something feels too good to be true, it probably is, so avoid falling for attractive job offers and work-from-home opportunities on social media platforms.

- Know your helplines: Know the helpline numbers so that you can report incidents, and encourage your family members to report any threat or issue as well.

- Raise Awareness: It is the duty of all netizens to raise awareness in society to arm more people against cybercrimes and fraud.

Conclusion

Cybercriminals are spreading across all ecosystems, and such bad actors now deploy technology even to support physical crimes. We need to stay alert and remain aware of such crimes and the modus operandi of cybercriminals. Awareness and education are our best weapons against the threats of cyber-enabled human trafficking, as the criminals feed on our vulnerabilities. Let's eradicate them once and for all and work towards creating a wholesome, safe cyber ecosystem for all.

https://www.scmp.com/week-asia/politics/article/3228543/inside-chinese-run-crime-hubs-myanmar-are-conning-world-we-can-kill-you-here